GDC 2026: A Personal Account

Introduction

Last week, I was back at GDC for the second year in a row, attending on my own (as an indie game dev wannabe). I have been coming on and off since the 2000s: not consistently, but often enough to have a sense of what the conference feels like when it is working and when it is not.

This post is also available on Medium. If you’re a paid Medium member and happen to read it there, it helps fund my next cup of coffee. Much appreciated ☕️😄

Heading into this year, I had reasons to be cautious. Last year felt a bit desolate: more a gathering of survivors than a festival (or perhaps that impression was amplified by my own position as a refugee who left the game industry for the more stable online ads industry a long time ago). The pre-conference mood this time around was not exactly warm either.

GDC rebranded itself as the GDC Festival of Gaming and cut ticket prices significantly. The main Festival Pass was priced at $1,199, down from $2,499 for the equivalent All-Access pass in 2025 (and with the early bird rate I paid $649, which made the drop feel even more dramatic). The pass structure was simplified to two main tiers: the Festival Pass and a new premium Game Changer Pass ($2,499, positioned as a VIP tier with lounge access and GDC Vault). Additional discounted rates were available for startups, indie developers, and academics. On paper, that reads as a positive move toward accessibility. But the announcement landed in a climate already dampened by the geopolitical situation, an ongoing war, and tightening US immigration policies that, as far as I heard, made many international attendees reluctant to make the trip. The changes felt more like a signal that something needed fixing than a reason to feel confident about the week ahead.

It turned out to be considerably better than I expected. The sessions felt genuinely vibrant—engaged audiences, rooms that filled up, speakers with real things to say. The exhibition halls were slightly smaller than last year, but denser and better organized, with more spaces designed for rest and informal conversation. Less sprawl, more energy. It felt like a deliberate choice, and it worked.

Part of what drove the mood, I think, was the return of actual game development content from named titles. Clair Obscur: Expedition 33, Ghost of Yotei, Cyberpunk 2077, Genshin Impact, Battlefield 6, Doom: The Dark Ages, Split Fiction—sessions from games people actually played, presented by the teams that built them. Last year that kind of content was sparse. This year it was a visible part of the program, and it brought with it the kind of developer who makes the hallway conversations worth having too.

Here are the sessions I attended.

Sessions

How AI Is Reshaping Game Creation (Presented by Google Cloud)

Session: Monday, March 9 | 10:10am – 11:10am | Game & Production Technology Track

Speakers: Catherine Hawayek (Google Cloud), Jeff Skelton (EA), Adam Smith (Unity), Muneki Shimada (Sony Interactive Entertainment)

This Google Cloud-sponsored session opened the day with an optimistic, industry-level view of where AI is taking game development. The panel brought together leaders from EA, Unity, and Sony—and the tone was consistently forward-looking. Two things stood out as genuinely useful.

The first was Jeff Skelton's framing of the entire history of game development tooling: it has always been about closing the gap between having an idea and getting it on screen. Every engine, every pipeline improvement, every new tool has been a step toward what he called "creating at the speed of thought." AI, in his view, is the biggest step in that direction yet. Not because it replaces anything, but because it accelerates iteration. Where a team once had two shots at something, they now have twenty.

The second came from Adam Smith at Unity. He described how the traditional seven-stage development pipeline (concept, prototype, greenlight, pre-production, production, live ops, post-production) is collapsing into three: Develop, Deploy, Grow. The prototype and greenlight phases are nearly merging. Non-engineers can now get a playable in minutes. That's not a small change to workflow; it shifts who can drive creative decisions and when.

That said, this was the kind of session big-company panels tend to produce: broad strokes, strong conviction, light on specifics. I find this format hard to get much from. The questions were good, but not every panelist answered them; some used the time to say what they came prepared to say rather than engage with what was actually asked. The optimism felt genuine, but the substance was thin. Still, the direction itself (AI as a tool that expands who gets to create and compresses the distance between idea and execution) is one I find genuinely compelling. The destination feels right, even if this particular session didn't give you much to work with on the way there.

Actionable Intelligence: What the SAG-AFTRA IMA Actually Means for Game Developers

Session: Monday, March 9 | 11:50am – 12:50pm | Audio Track

Speakers: Bo Crutcher (Blindlight), William "Chip" Beaman (Halp Network), Jennifer Hale (Independent), Patrick Michalak (Insomniac Games), Kal-El Bogdanove (Voice Over/Performance Capture Director)

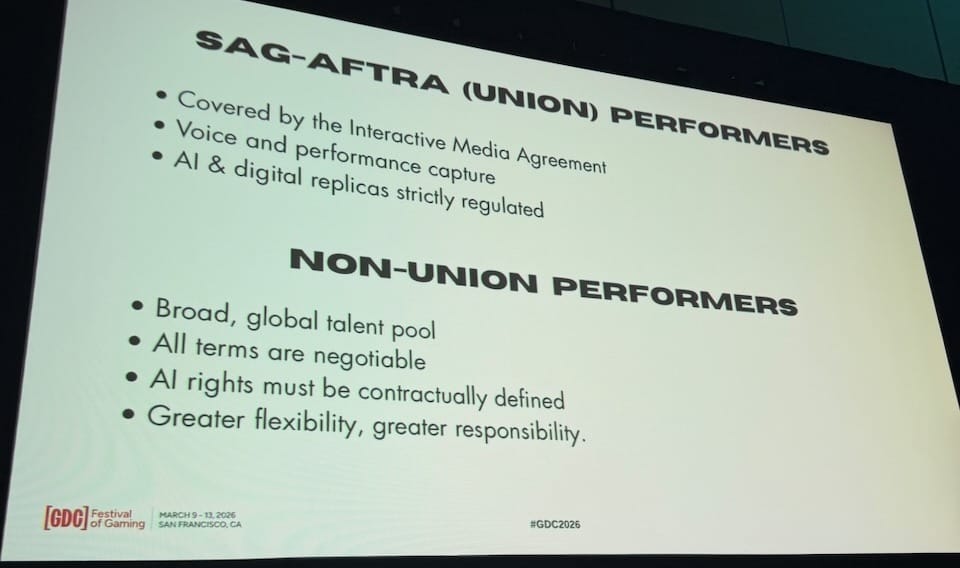

This panel brought together casting producers, a production services veteran, a veteran voice actor, a AAA dialogue manager, and a Voice Over/Performance Capture director to break down the AI provisions of the SAG-AFTRA (Screen Actors Guild – American Federation of Television and Radio Artists) Interactive Media Agreement (IMA) into something developers can actually act on. If you work with voice talent (union or not) and AI is anywhere near your production pipeline, this is the session you didn't know you needed.

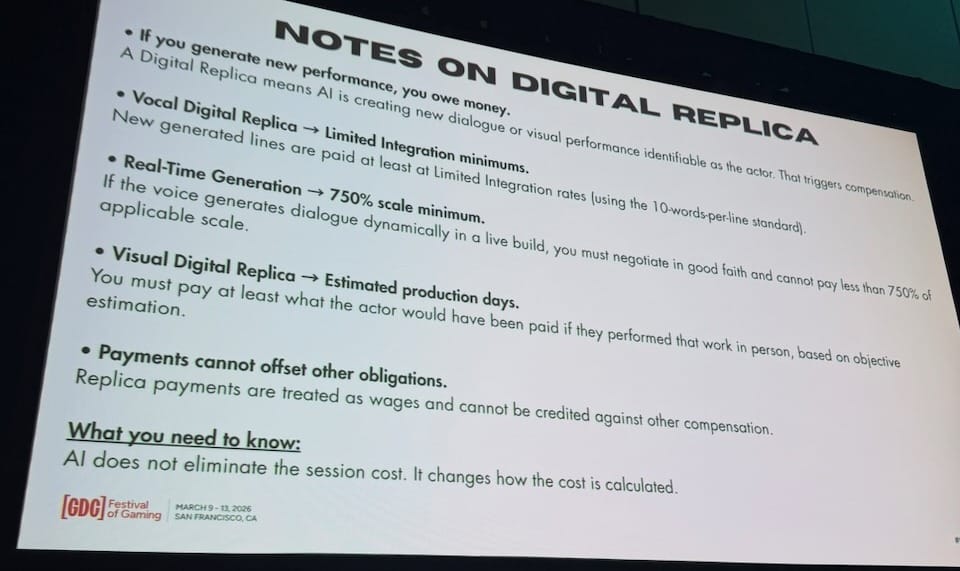

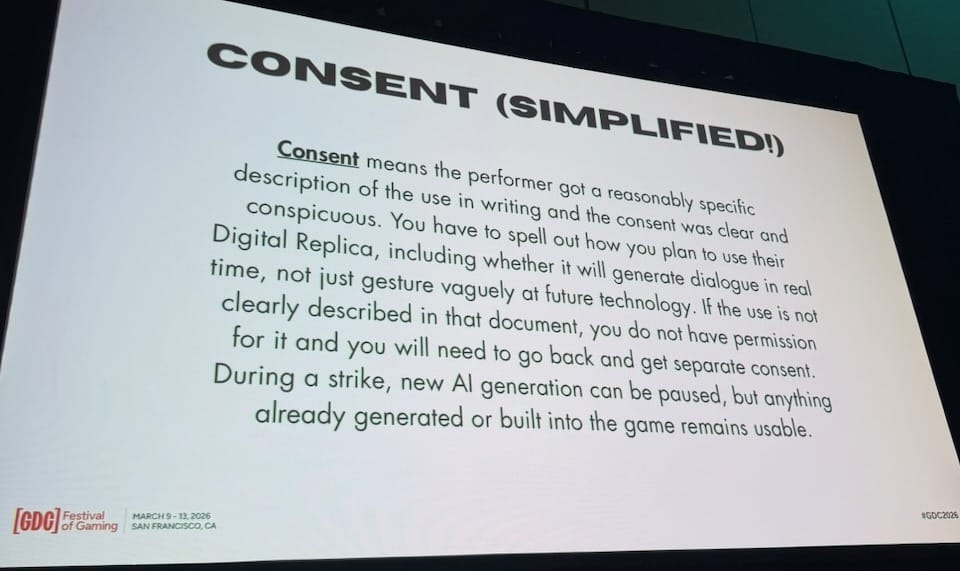

One mental model from this session stood out: AI does not eliminate the session cost; it changes how the cost is calculated. The whole panel was built around that shift. Developers who go into AI voice tooling expecting to bypass recording costs are in for a surprise, and the IMA spells out exactly what that surprise looks like. There are four defined categories of AI use, each with specific compensation rules most developers have never heard of:

- Digital Replica (new lines synthesized from union recordings: minimum scale for 300 lines, or 750% of scale for real-time, chatbot-style generation)

- Independently Created Digital Replica (ICDR) (built from non-IMA sources such as YouTube interviews but still recognizably that actor: no union minimum, fully negotiated)

- Secondary Performance Payment (SPP) (a reuse fee when a motion capture performance travels from one game to another by the same company: 125% of scale within 90 days, 135% after)

- Real-Time Generated Content (live dynamic voice: a one-time payment at 750% of scale)

These numbers exist. Most developers don't know them until something goes wrong.
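The SPP and real-time rules above are simple multipliers on scale, which makes the cost shift easy to see. Here is a minimal sketch of that arithmetic. It assumes `scale` is whatever base rate applies to the performer. It also assumes the 90-day SPP window counts from the original performance, with day 90 still inside it; the summary doesn't say either way. The function and constant names are hypothetical.

```python
# Sketch of the SPP and real-time percentages as summarized above.
# Assumptions: `scale` is the applicable base rate supplied by the caller, and the
# 90-day window counts from the original performance, with day 90 inside the window.

SPP_WITHIN_90_DAYS_PCT = 125
SPP_AFTER_90_DAYS_PCT = 135
REAL_TIME_PCT = 750


def spp_payment(scale: float, days_since_original: int) -> float:
    """Secondary Performance Payment when a mocap performance is reused in another game."""
    if days_since_original < 0:
        raise ValueError("days_since_original must be non-negative")
    pct = SPP_WITHIN_90_DAYS_PCT if days_since_original <= 90 else SPP_AFTER_90_DAYS_PCT
    return scale * pct / 100


def real_time_payment(scale: float) -> float:
    """One-time payment for real-time generated content (live dynamic voice)."""
    return scale * REAL_TIME_PCT / 100


if __name__ == "__main__":
    base = 1000.0  # hypothetical scale figure, for illustration only
    print(spp_payment(base, 30))    # 1250.0
    print(spp_payment(base, 120))   # 1350.0
    print(real_time_payment(base))  # 7500.0
```

A one-time real-time payment at 7.5x scale is the kind of line item that surprises a team that expected AI to remove session costs.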

Going non-union doesn't sidestep any of this. State legislation (California AB 2013), the federal NO FAKES Act, and the EU AI Act apply regardless of union status. The difference is that with non-union talent, you write the rules: the IMA's framework is gone, and the full burden of defining AI rights falls on the developer's contract. Jennifer Hale pointed to the AI rider from NAVA (National Association of Voice Actors) as a useful starting point for non-union engagements. The rider is a standardized contract addendum, drafted at the request of actors and vetted by attorneys, that defines how a performer's voice and likeness may and may not be used for AI. The panel's bottom line: greater flexibility, greater responsibility.

The most grounded perspective came from Patrick Michalak, who manages the dialog team at Insomniac Games. His team experimented with AI temp VO and found the uncanny valley very real—when synthetic performers entered a creative review, the reaction was immediate: "Turn it off." But his deeper observation was about what AI revealed about their pipeline. Traditional VO workflows were never designed to answer "where did this performance come from, and who authorized it?" because nothing made that question necessary before. The moment AI enters production, you must be able to prove origin, intent, life cycle, and usage boundaries for every asset. That's a metadata model most studios haven't built. As Michalak put it: "AI hasn't been a disruption. It's become a mirror"—showing you gaps that were always there, just never visible. Studios are discovering those gaps not in planning meetings, but mid-production. The practical implication: decide whether you'll use digital replicas or real-time generation before casting, not after delivery, and build the accountability structures to prove it.
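Michalak's four questions (origin, intent, life cycle, usage boundaries) map naturally onto a per-asset record. Below is a minimal sketch of what such a metadata model might look like. The class, its fields, and the `permits` check are all hypothetical; nothing here is what Insomniac showed.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum
from typing import FrozenSet, Optional


class Origin(Enum):
    RECORDED_SESSION = "recorded_session"        # captured from a live performer
    DIGITAL_REPLICA = "digital_replica"          # new lines synthesized from recordings
    REAL_TIME_GENERATED = "real_time_generated"  # produced live at runtime


@dataclass(frozen=True)
class VoiceAssetProvenance:
    asset_id: str
    performer: str
    origin: Origin            # where the performance came from
    intent: str               # why it exists, e.g. "temp VO" or "final line"
    authorized_by: str        # who approved this use
    created: date             # start of the asset's life cycle
    expires: Optional[date]   # end of the permitted life cycle, if any
    allowed_uses: FrozenSet[str] = field(default_factory=frozenset)  # usage boundaries

    def permits(self, use: str, on: date) -> bool:
        """True only if `use` is inside the recorded boundaries on date `on`."""
        if on < self.created:
            return False
        if self.expires is not None and on > self.expires:
            return False
        return use in self.allowed_uses


if __name__ == "__main__":
    line = VoiceAssetProvenance(
        asset_id="vo_0042",
        performer="Performer A",
        origin=Origin.RECORDED_SESSION,
        intent="final line",
        authorized_by="dialogue lead",
        created=date(2026, 1, 10),
        expires=date(2027, 1, 10),
        allowed_uses=frozenset({"in_game"}),
    )
    print(line.permits("in_game", date(2026, 6, 1)))  # True
    print(line.permits("trailer", date(2026, 6, 1)))  # False
```

The point of making the record immutable and attaching it to every asset is that "who authorized this?" becomes a lookup rather than an investigation.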

Four Programmers, One AAA RPG: How Sandfall Interactive Built Clair Obscur: Expedition 33

Session: Monday, March 9 | 1:50pm – 2:50pm | Game & Production Technology, Design Track

Speakers: Tom Guillermin (Technical Director & Lead Programmer, Sandfall Interactive), Florian Torres (Senior Gameplay Programmer, Sandfall Interactive)

An hour before this session started, the line outside Room 3004 stretched the full length of the West Hall corridor. People were sitting on the floor.

GDC 2026 had its share of AI keynotes and sponsored showcases, but the session that filled a hallway was a postmortem from a 33-person studio in France (plus a dog, though I'm not sure of the exact count at the moment). Clair Obscur: Expedition 33 was built with four programmers. For many attendees, this hour was THE best of the week.

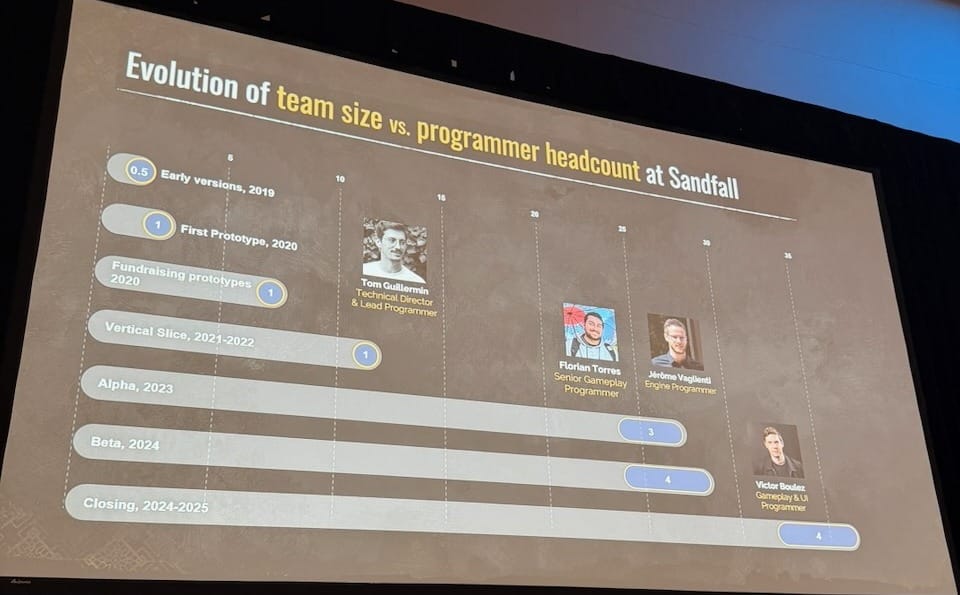

Tom Guillermin, technical director and lead programmer, opened with something unusual: an honest timeline of how the programming team actually grew. From the early prototypes in 2019 through the fundraising rounds and the entire vertical slice (2021–2022), Tom was the only programmer on the project. A 12-person team shipped the vertical slice that secured the deal with publisher Kepler Interactive, and Tom wrote all the code. Florian Torres (Senior Gameplay Programmer) and Jérôme Vaglienti (Engine Programmer) joined only in 2023 for Alpha. Victor Boulez (Gameplay & UI Programmer) came on for Beta in 2024. By launch, the programming team was still only four people.

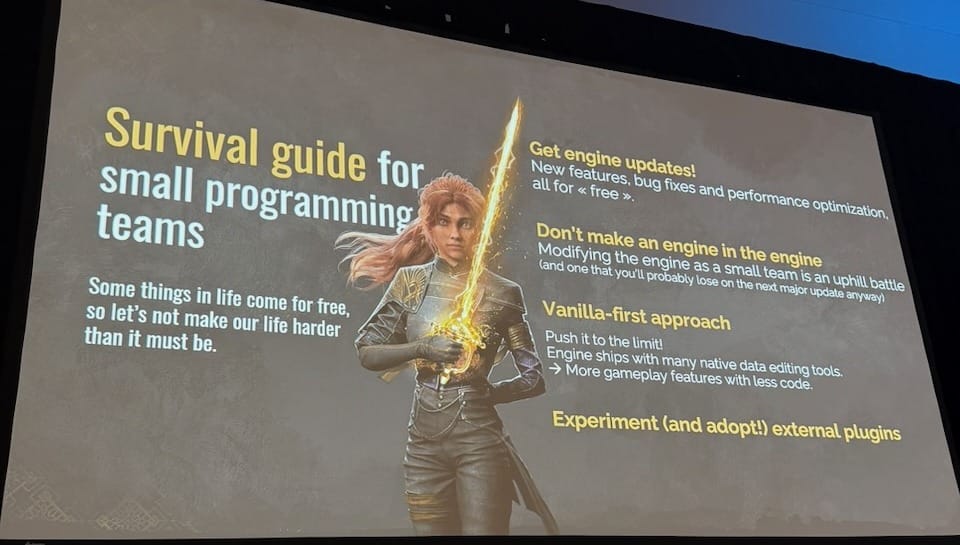

That constraint shaped every technical decision. Before going into systems, Tom offered a short survival guide: keep the engine updated (free bug fixes and new features with every release), and don't make an engine in the engine (custom modifications fight you on every major update—a small team cannot afford that). Most importantly: push the native tools to their limits before building anything custom.

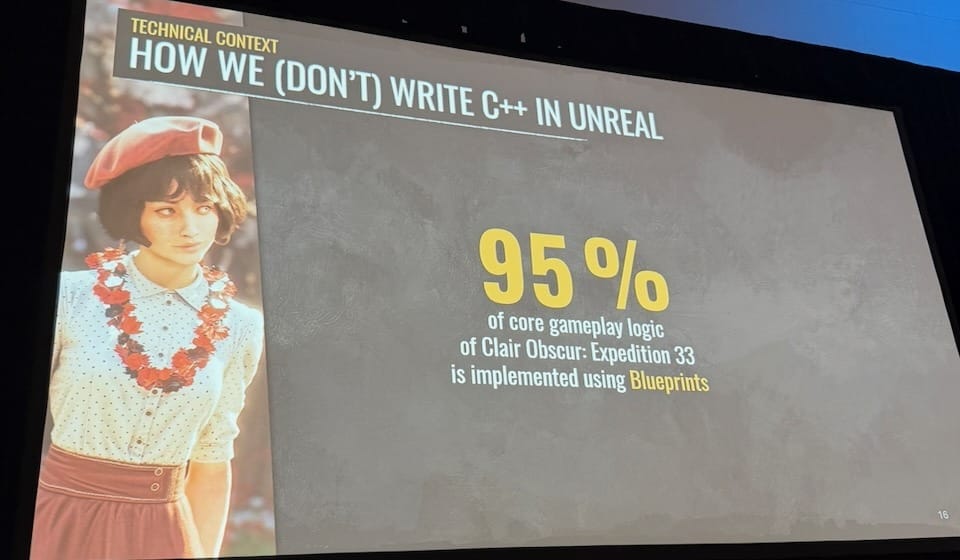

This philosophy—call it vanilla-first—runs through every decision in the talk. At a conference where many sessions are about bespoke pipelines and custom tooling, Sandfall's answer is the opposite: understand what you already have, and use it well. The most striking expression of this is the number Florian Torres put on screen early:

95% of all core gameplay logic in Expedition 33 is implemented in Unreal Blueprints, not C++.

Not as an oversight or a temporary shortcut, but as an intentional choice held through the entire production.

Blueprints don't crash the editor on logic errors. Iteration is fast because there is no recompilation cycle. And crucially, Blueprints are accessible to non-programmers: designers, the sound team, and artists could all contribute to gameplay logic directly. The downsides were real and acknowledged honestly. Blueprint assets are binary (no merging across branches, exclusive file locks), debugging in packaged builds is harder, and casting carries a memory hazard that requires discipline. Packaging was also slow; the team addressed that with a dedicated build machine, cutting full build time from over two hours to 50 minutes. The 5% of C++ covers platform-specific code, thin accessor layers that expose more of the engine to Blueprints, and performance-critical paths. The pattern: write just enough C++ to enable more Blueprints.

I believe this is the raison d'être of GDC: hard-won, specific, production-tested knowledge, the kind you could only get by sitting in a room with people who actually shipped the thing. I know many AAA titles built on Unreal that went deep into custom C++ engine work, sophisticated enough that updating the engine became a multi-month project, and the teams accepted it as inevitable. It is not inevitable. Seeing a game of this scope and quality built on 95% Blueprints is a reminder that the complexity was a choice, and that choosing differently is possible. That should be a relief to a lot of developers. Sessions like this used to be common at GDC. I want more of them.

The deeper consequence of going Blueprint-first was that it forced the team to think of the programmers' role differently. Their job was not to create content; it was to build systems that let everyone else create content autonomously. A unified Progression State API exposed quest status, inventory, party state, and cinematic flags as simple Blueprint nodes, so designers could query any game state without knowing how the underlying systems worked. Blueprint variables were set to private by default (a small engine change) to encourage encapsulation and catch accidental dependencies early. Edit-time data validation ran automatically on save. For runtime errors, the team went through three iterations. First came log strings no one read, then on-screen messages that disappeared in the noise. The final version was a blocking popup that halts play, shows the Blueprint call stack, and includes a Retry button that jumps directly to the failing node in the editor. It was enabled by default for the whole team, including QA. "A word of caution: do not spam your team. And a word of apology: sorry, team."
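In engine-agnostic terms, the Progression State API is a facade: one object that answers designer questions while hiding which subsystem owns the answer. Here is a minimal Python sketch of that pattern. It is not Sandfall's code; in the game these would be Blueprint-callable functions, and every class and method name below is hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List, Set


class QuestStatus(Enum):
    NOT_STARTED = 0
    ACTIVE = 1
    COMPLETED = 2


# Internal systems: designers never touch these directly.
@dataclass
class QuestLog:
    status: Dict[str, QuestStatus] = field(default_factory=dict)


@dataclass
class Inventory:
    counts: Dict[str, int] = field(default_factory=dict)


@dataclass
class Party:
    members: List[str] = field(default_factory=list)


@dataclass
class CinematicLog:
    seen: Set[str] = field(default_factory=set)


class ProgressionState:
    """One read-only facade; each method corresponds to one simple query node."""

    def __init__(self, quests: QuestLog, inventory: Inventory,
                 party: Party, cinematics: CinematicLog) -> None:
        self._quests = quests
        self._inventory = inventory
        self._party = party
        self._cinematics = cinematics

    def quest_status(self, quest_id: str) -> QuestStatus:
        return self._quests.status.get(quest_id, QuestStatus.NOT_STARTED)

    def item_count(self, item_id: str) -> int:
        return self._inventory.counts.get(item_id, 0)

    def is_in_party(self, character: str) -> bool:
        return character in self._party.members

    def cinematic_seen(self, cinematic_id: str) -> bool:
        return cinematic_id in self._cinematics.seen


if __name__ == "__main__":
    state = ProgressionState(
        QuestLog({"act1_main": QuestStatus.COMPLETED}),
        Inventory({"potion": 3}),
        Party(["hero", "mage"]),
        CinematicLog({"prologue"}),
    )
    # A designer-side condition built only from facade queries:
    can_open_gate = (state.quest_status("act1_main") is QuestStatus.COMPLETED
                     and state.cinematic_seen("prologue"))
    print(can_open_gate)  # True
```

Unknown IDs fall back to safe defaults (not started, zero items). That keeps designer-authored conditions from failing just because a system hasn't been touched yet.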

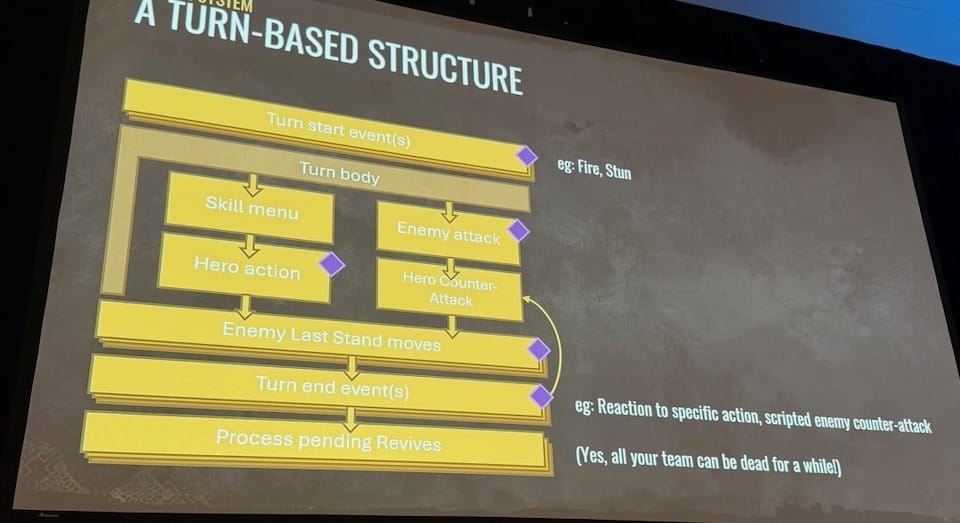

The battle system shows what this looks like at full depth. Each turn is a sequence of phases (turn start events, hero action or enemy attack, last stand moves, turn end events, pending revives), and each phase has scripting hooks that designers implement independently. Status effects hook into turn start; enemy unique behaviors hook into turn end. All skills are Blueprint assets: designers author complete skills (animations, camera work, Niagara particles, audio, damage logic) without programmer involvement. The same principle extended to the world map: the player character there is the exact same class as in regular levels, just with kill switches for jumping and climbing and a camera tweak for the diorama aesthetic. Esquie, the flying mount, inherits directly from the main character class. "Swimming" is a flat plane below the water surface with an animation state change. Every new feature started from what already existed.
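The phase-and-hook structure can be sketched as a fixed turn loop that designers extend only by registering callbacks. Below is a minimal Python version under that reading. The phase list comes from the talk; the runner, the hook signature, and the two example hooks are hypothetical.

```python
from collections import defaultdict
from enum import Enum, auto
from typing import Callable, DefaultDict, List

Battle = dict  # toy battle state shared by all hooks
Hook = Callable[[Battle], None]


class Phase(Enum):
    # Definition order is execution order within a turn.
    TURN_START = auto()
    ACTION = auto()           # hero action or enemy attack
    LAST_STAND = auto()
    TURN_END = auto()
    PENDING_REVIVES = auto()


class TurnRunner:
    """Runs each phase in order; designers only register hooks, never edit the loop."""

    def __init__(self) -> None:
        self._hooks: DefaultDict[Phase, List[Hook]] = defaultdict(list)

    def register(self, phase: Phase, hook: Hook) -> None:
        self._hooks[phase].append(hook)

    def run_turn(self, battle: Battle) -> None:
        for phase in Phase:  # iterating an Enum follows definition order
            for hook in self._hooks[phase]:
                hook(battle)


# Designer-authored hooks (hypothetical examples).
def burn(battle: Battle) -> None:
    """Status effect: deals damage at turn start."""
    battle["enemy_hp"] -= 5


def enrage_when_low(battle: Battle) -> None:
    """Enemy unique behaviour: doubles attack at turn end once below 50 HP."""
    if battle["enemy_hp"] < 50:
        battle["enemy_attack"] *= 2


if __name__ == "__main__":
    runner = TurnRunner()
    runner.register(Phase.TURN_END, enrage_when_low)
    runner.register(Phase.TURN_START, burn)
    battle = {"enemy_hp": 52, "enemy_attack": 10}
    runner.run_turn(battle)
    print(battle)  # {'enemy_hp': 47, 'enemy_attack': 20}
```

Registration order doesn't matter; phase order does. The turn-end hook above is registered first but still sees the HP after the turn-start burn.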

The closing was brief: "Design your systems with creative freedom in mind. Reuse as much as you can. Empower your technical designers to create content. Have safeguards. And trust your team. Most of the time, designers will use your systems in ways more creative than you would have imagined as a programmer." The packed room, and the line that filled the corridor outside an hour before the doors opened, suggested this message arrived exactly where it needed to.

How Ubisoft La Forge Builds Its Own Image Generation Models

Session: Monday, March 9 | 3:10pm – 4:10pm | Machine Learning Summit

Speaker: Alexis Rolland (Director, Ubisoft La Forge China)

Before getting into any technical content, Alexis Rolland put a single statement on screen: "AI to assist our creators, not to automate the creation." Not in the conclusion, not as a caveat—as the premise. Everything that followed was held to that standard, and it showed in how the work was framed throughout.

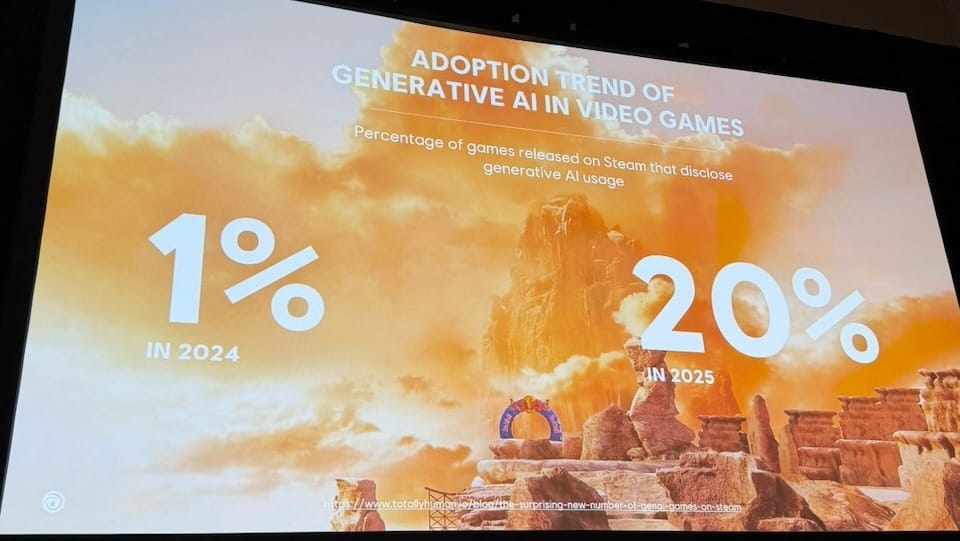

The context for why this matters: 1% of games released on Steam disclosed generative AI usage in 2024. In 2025, that number was 20%. One in five games on the platform in a single year. Rolland noted this is a lower bound — games using these tools without disclosing aren't counted. The shift is already here.

La Forge's response has been to build internal models rather than rely on off-the-shelf tools. The reasoning is practical: training Stable Diffusion 1.4 from scratch cost around $600K; Stable Diffusion 3 around $10M. That's not a game studio budget. What is feasible is fine-tuning existing open-source models on internal data for specific use cases. Their first model, GenBrush, was fine-tuned on Stable Diffusion XL using concept art from Assassin's Creed, Skull & Bones, and other Ubisoft brands — 42,000 files collected, filtered down to around 300 manually captioned images for the first version. Artists wrote the captions themselves, in their own vocabulary for each brand, because they know the visual language better than the researchers do. The resulting model has a deliberately painterly quality—not photorealistic. That was a design choice: "photorealistic AI output misleads the team into treating it as the final product. The painterly quality gives more room for interpretation."

The second version introduced model merging: combining the parameters of multiple trained models, either uniformly or by selectively identifying which individual layers perform best. Ten datasets, 36 experiments, six final models merged into one. The genealogy diagram for that process looks like a tournament bracket. Rolland called model merging "your new secret trick to go from good models to great models." It is a training-free technique that gets little attention in academic research but is widely used by the open-source community. Beyond full models, the team also experimented with LoRA (low-rank adaptation) training on datasets as small as 15–30 images, building a recipe that other Ubisoft production teams could use to train their own style-specific models without researcher involvement.
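Both merging modes mentioned above are simple to state. Here is a toy sketch that assumes every model shares the same layer names and sizes. It represents each layer as a flat list of floats, whereas real checkpoints hold tensors. How La Forge scored layers to pick winners wasn't detailed, so `choice` is simply supplied by the caller; the function names are hypothetical.

```python
from typing import Dict, List

StateDict = Dict[str, List[float]]  # layer name -> flattened weights (toy stand-in for tensors)


def merge_uniform(models: List[StateDict]) -> StateDict:
    """Average each parameter across all models; layers must match in name and size."""
    if not models:
        raise ValueError("need at least one model")
    merged: StateDict = {}
    for name in models[0]:
        per_position = zip(*(m[name] for m in models))
        merged[name] = [sum(values) / len(models) for values in per_position]
    return merged


def merge_per_layer(models: List[StateDict], choice: Dict[str, int]) -> StateDict:
    """Take each layer wholesale from whichever model `choice` picks for it."""
    return {name: list(models[choice[name]][name]) for name in models[0]}


if __name__ == "__main__":
    a = {"down": [1.0, 2.0], "up": [0.0, 4.0]}
    b = {"down": [3.0, 2.0], "up": [2.0, 0.0]}
    print(merge_uniform([a, b]))                         # {'down': [2.0, 2.0], 'up': [1.0, 2.0]}
    print(merge_per_layer([a, b], {"down": 0, "up": 1}))  # {'down': [1.0, 2.0], 'up': [2.0, 0.0]}
```

Neither function trains anything. This is why merging counts as training-free: the cost is evaluation, deciding which combinations to keep.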

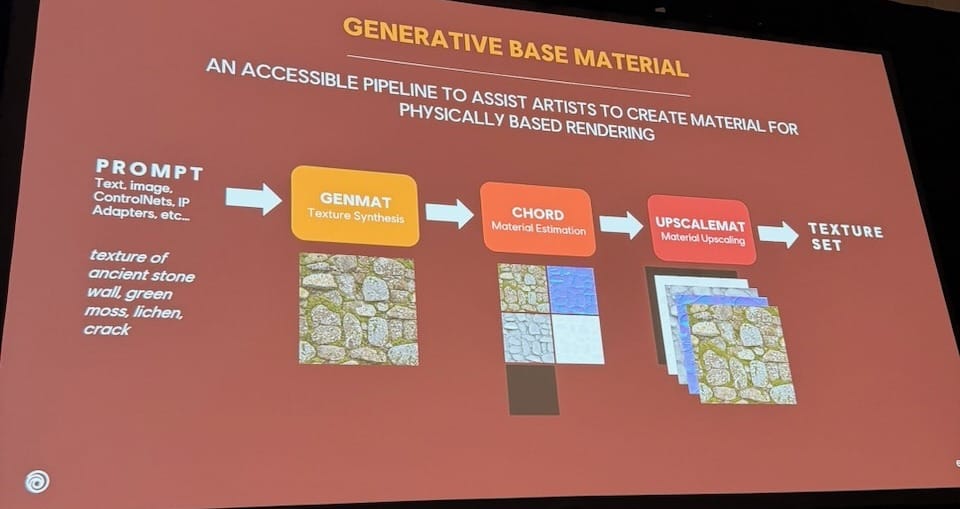

The work extended into materials. Their Generative Base Material pipeline takes a text prompt and produces a full physically based rendering (PBR) texture set (base color, normal, roughness, metallic) through a three-step chain: GenMat for texture synthesis, CHORD for material estimation, and UpscaleMat for resolution upscaling. CHORD ("Chain of Rendering Decomposition") was published at SIGGRAPH Asia and released as open-source ComfyUI nodes.
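Structurally, the pipeline is three stages composed in sequence, with the PBR set as the contract between them. The sketch below uses placeholder stages only. The function names are hypothetical, and the bodies don't model GenMat, CHORD, or UpscaleMat; they show only the shape of the data passed between steps.

```python
from dataclasses import dataclass, fields
from typing import List

Image = List[List[float]]  # toy single-channel image: rows of pixel values


@dataclass
class PBRTextureSet:
    base_color: Image
    normal: Image
    roughness: Image
    metallic: Image


def synthesize_texture(prompt: str, size: int) -> Image:
    """Placeholder for the text-to-texture stage; returns a flat mid-grey image."""
    return [[0.5] * size for _ in range(size)]


def estimate_material(texture: Image) -> PBRTextureSet:
    """Placeholder for the material-estimation stage: one texture in, four maps out."""
    def copy(img: Image) -> Image:
        return [row[:] for row in img]
    return PBRTextureSet(copy(texture), copy(texture), copy(texture), copy(texture))


def upscale(img: Image, factor: int) -> Image:
    """Nearest-neighbour upscaling, standing in for a learned upscaler."""
    return [[px for px in row for _ in range(factor)]
            for row in img for _ in range(factor)]


def text_to_pbr(prompt: str, size: int = 4, factor: int = 2) -> PBRTextureSet:
    """Chain the three stages; the upscale is applied to every map in the set."""
    maps = estimate_material(synthesize_texture(prompt, size))
    return PBRTextureSet(*(upscale(getattr(maps, f.name), factor) for f in fields(maps)))


if __name__ == "__main__":
    result = text_to_pbr("mossy stone")
    print(len(result.normal), len(result.normal[0]))  # 8 8
```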

The presentation was methodically documented and clearly useful as a knowledge-sharing exercise. But every results slide carried the same disclaimer: "Images generated for testing and R&D purposes. Not used in production." That gap (from research prototype to shipped feature) was present throughout without being directly addressed. It would be interesting to see a follow-up talk in a year or two once these tools have made their way into an actual production pipeline and Ubisoft can speak to what that looked like in practice.

There is also a question the session didn't fully answer: whether fine-tuning on internal data is actually better than using frontier foundation models with careful prompting. The IP and data provenance argument is real; Ubisoft can't train on artwork they don't own, and in a post-SAG-AFTRA environment that matters. The brand-specific identity argument also holds for large publishers. But foundation models have closed a significant amount of ground since 2023 when GenBrush v1 was trained. For most studios without Ubisoft's scale and internal data assets, the honest question is whether the result justifies the investment. This talk gives you the methodology to try it; it doesn't yet tell you whether you should.

Balatro's Community Playbook: From Launch to Letting Go

Session: Monday, March 9 | 4:30pm – 5:00pm | Discovery & Marketing, Independent Development Track

Speaker: Emma Smith-Bodie (Senior Community Manager, Playstack)

Emma Smith-Bodie, Senior Community Manager at publisher Playstack, walked through how the Balatro community was built from the ground up and what it took to keep it healthy—and eventually hand it off. The core argument: the goal of community management is not players or follower counts, but advocates. "Working with your community to turn them into advocates could be as powerful, if not more, than any advertising campaign you create, and cheaper." The structural frame she used was four phases: spark the first flame, keep it alive, hand over the keys, and prepare for firefighting.

The talk was honest about the small failures: a joker battle royale that lost 56% of its votes from round one to round three, and a poll that got zero votes. Both were shown on slides without embarrassment, along with what was learned. Most of the session covered familiar ground: set up your channels, be authentic, engage consistently, plan for conflict. The parts worth the time were the Balatro-specific decisions. LocalThunk's sincere explanation when he pulled the first demo built the organic trust that followed. And Playstack's eventual decision to step back from moderating the subreddit, not because things went wrong but because their presence was making it less authentic, is the kind of thing you rarely hear said plainly. Those two moments were a small fraction of 30 minutes. They should have been the whole talk.

AI-Driven 3D Game Prototyping with Engine Integration

Session: Tuesday, March 10 | 10:10am – 11:10am | Game & Production Technology

Speaker: Yang Hao (sunnyhao) (Principal Engineer, LightSpeed Studios, Tencent Games)

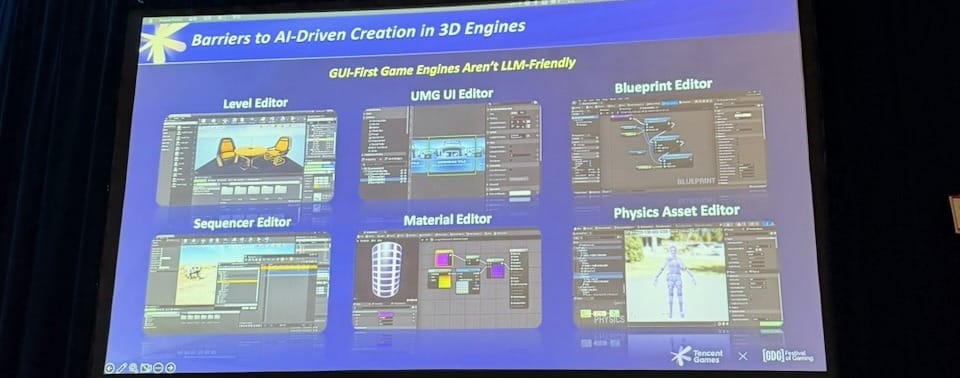

Yang Hao from LightSpeed Studios (a Tencent studio known for PUBG Mobile) opened with a problem definition that most AI-and-gamedev discussions skip past: GUI-first game engines are structurally hostile to large language models. Level editors, Blueprint nodes, material graphs, sequencer—these interfaces are designed for human hands navigating pixels. LLMs prefer structured APIs and code. The web is already LLM-friendly; game engines are not. That gap is the real obstacle, and stating it plainly was the most useful thing in the session.

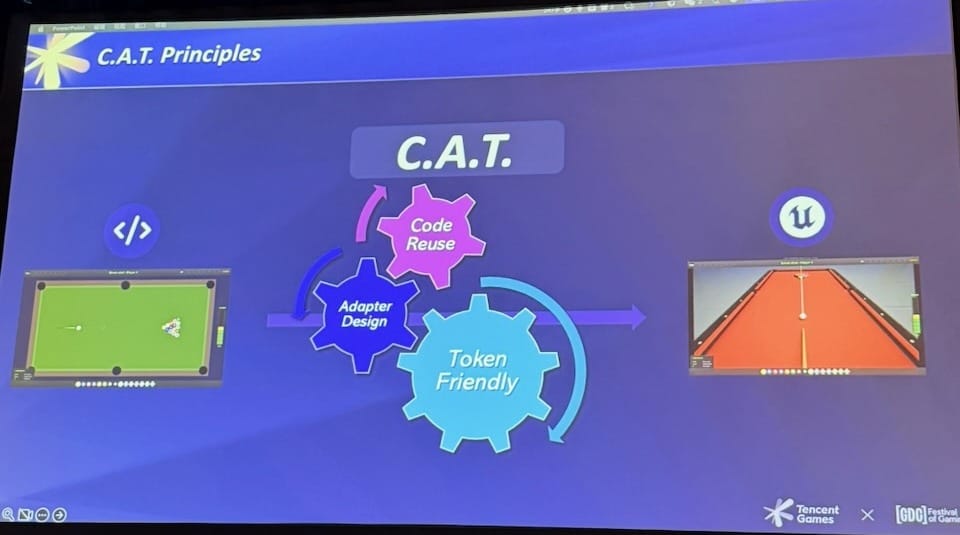

Their answer is built on PuerTS, an open-source TypeScript runtime plugin for Unreal and Unity (available on GitHub). Where a human would drag nodes in Blueprint, the AI generates TypeScript that calls the same engine functions directly. From there, they organized the architecture around three principles called C.A.T. (Code Reuse, Adapter Design, Token Friendly) to govern how a web prototype maps onto the engine version. The workflow is: prompt → web prototype → automatic engine import → engine testing and iteration. Three demo games showed this in practice: a top-down roguelike shooter where the first prompt ran for 14 minutes and completed around 70% of the final features, along with two others at different scales and genres.
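The talk didn't show C.A.T. in code. Still, the Code Reuse and Adapter Design principles suggest a familiar shape: game rules written once against a narrow interface, with one adapter per backend. Below is a Python sketch of that pattern under that assumption. All names are hypothetical, and in their setup the engine-side adapter would be TypeScript running on PuerTS.

```python
from typing import Dict, List, Protocol, Tuple


class EngineAdapter(Protocol):
    """The narrow surface game logic is allowed to call (hypothetical)."""
    def spawn(self, kind: str, x: float, y: float) -> int: ...
    def log(self, message: str) -> None: ...


class WaveLogic:
    """Engine-agnostic rules, written once and reused on every backend."""

    def __init__(self, engine: EngineAdapter) -> None:
        self.engine = engine

    def start_wave(self, wave: int) -> List[int]:
        ids = [self.engine.spawn("enemy", 10.0 * i, 0.0) for i in range(wave + 2)]
        self.engine.log(f"wave {wave}: {len(ids)} enemies")
        return ids


class WebAdapter:
    """Browser-prototype backend: just records what would be drawn."""

    def __init__(self) -> None:
        self.entities: List[Tuple[str, float, float]] = []
        self.messages: List[str] = []

    def spawn(self, kind: str, x: float, y: float) -> int:
        self.entities.append((kind, x, y))
        return len(self.entities) - 1

    def log(self, message: str) -> None:
        self.messages.append(message)


class EngineSideAdapter:
    """Engine backend stand-in; a real one would forward to engine APIs."""

    def __init__(self) -> None:
        self._next_id = 1000
        self.actors: Dict[int, Tuple[str, float, float]] = {}
        self.messages: List[str] = []

    def spawn(self, kind: str, x: float, y: float) -> int:
        actor_id = self._next_id
        self._next_id += 1
        self.actors[actor_id] = (kind, x, y)
        return actor_id

    def log(self, message: str) -> None:
        self.messages.append(message)
```

Because `WaveLogic` only ever sees the adapter interface, moving from the web prototype to the engine means swapping one adapter rather than regenerating the game logic. The small interface also keeps the token footprint low.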

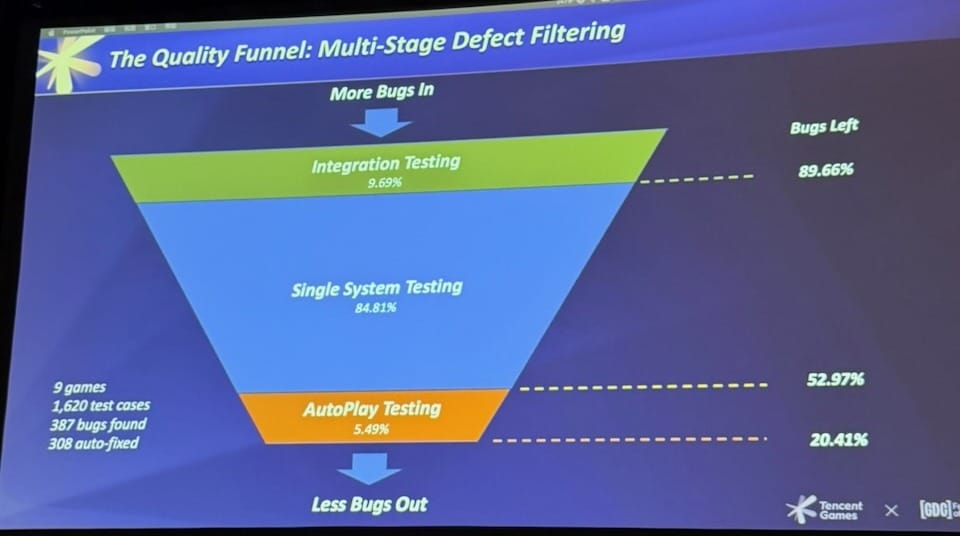

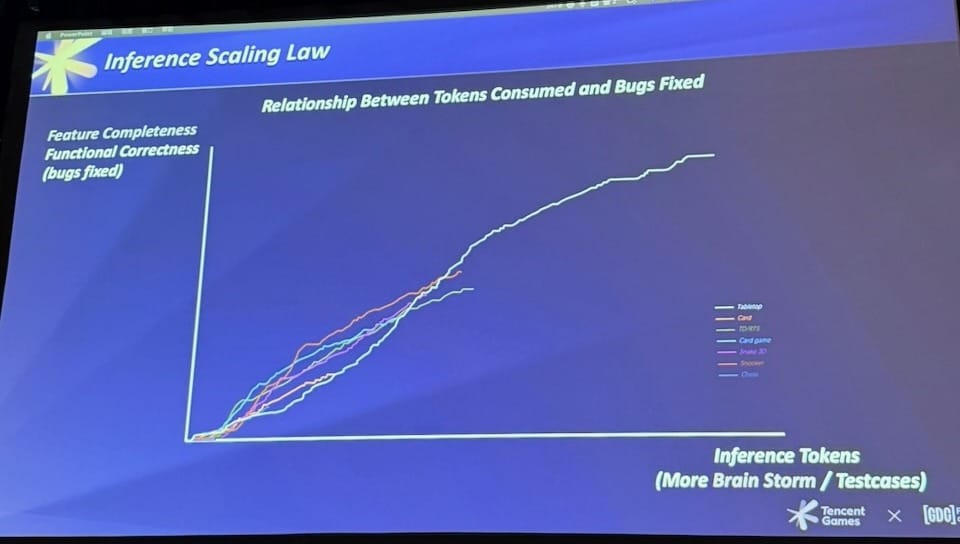

The more interesting contribution was how they handle the quality problem: layered automated testing as the stop condition for the AI's iteration loop, rather than slow human feedback cycles. Across their prototype games, the testing layer automatically fixed around 80% of bugs without human intervention. The "Inference Scaling Law" slide showed the relationship between tokens consumed and bugs fixed: a logarithmic curve with a knee point where returns diminish, and a ceiling set by the underlying model. The argument is that adding more testing dimensions (performance, safety, stability) could eventually compress the prototype-to-production gap.
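The core idea, tests rather than a human deciding when the AI stops iterating, fits in a small loop. The sketch below makes several assumptions. Test layers run cheapest first and stop at the first failure, and `max_rounds` stands in for a token budget. The "model" is a toy that fixes one reported issue per call. All names are hypothetical.

```python
from typing import Callable, List, Tuple

Build = str
TestLayer = Callable[[Build], List[str]]  # returns failure messages; empty means pass


def run_layers(build: Build, layers: List[TestLayer]) -> List[str]:
    """Run layers in order and stop at the first one that reports failures."""
    for layer in layers:
        failures = layer(build)
        if failures:
            return failures
    return []


def iterate_until_green(generate: Callable[[List[str]], Build],
                        layers: List[TestLayer],
                        max_rounds: int) -> Tuple[Build, int, List[str]]:
    """Tests, not a human, decide when to stop; max_rounds stands in for a token budget."""
    if max_rounds < 1:
        raise ValueError("max_rounds must be at least 1")
    feedback: List[str] = []
    for round_no in range(1, max_rounds + 1):
        build = generate(feedback)
        feedback = run_layers(build, layers)
        if not feedback:
            return build, round_no, []
    return build, max_rounds, feedback


def make_fake_generator() -> Callable[[List[str]], Build]:
    """Toy 'model' that fixes the first reported issue on each call."""
    remaining = ["crash", "slow"]

    def generate(feedback: List[str]) -> Build:
        for issue in feedback:
            if issue in remaining:
                remaining.remove(issue)
                break
        return "+".join(remaining) or "clean"

    return generate


def functional(build: Build) -> List[str]:
    return ["crash"] if "crash" in build else []


def performance(build: Build) -> List[str]:
    return ["slow"] if "slow" in build else []


if __name__ == "__main__":
    print(iterate_until_green(make_fake_generator(), [functional, performance], 5))
    # ('clean', 3, [])
```

Adding a layer (say, a stability check) is just another entry in `layers`. That is the sense in which more testing dimensions tighten the stop condition.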

This is a sponsor session, so most of the pipeline shown is Tencent's proprietary tooling — PuerTS is the one open-source piece anyone outside can use today. The demos are all prototypes, and the production gap was left open. At the very end, the speaker briefly noted that MCP-style engine integration (exposing engine capabilities as structured tool calls rather than generating TypeScript code) is probably the direction things are heading, but didn't go further. That question deserved more time. Even so, the problem framing was clear and honest, the engineering detail was real, and the testing approach was the kind of practical contribution you don't usually get from a sponsored slot.

Build Faster, Iterate More: AI-Powered Prototyping with MCP

Session: Tuesday, March 10 | 11:50am – 12:20pm | Machine Learning Summit

Speaker: Brent Vincent ("MoonRocketApollo") (Roblox)

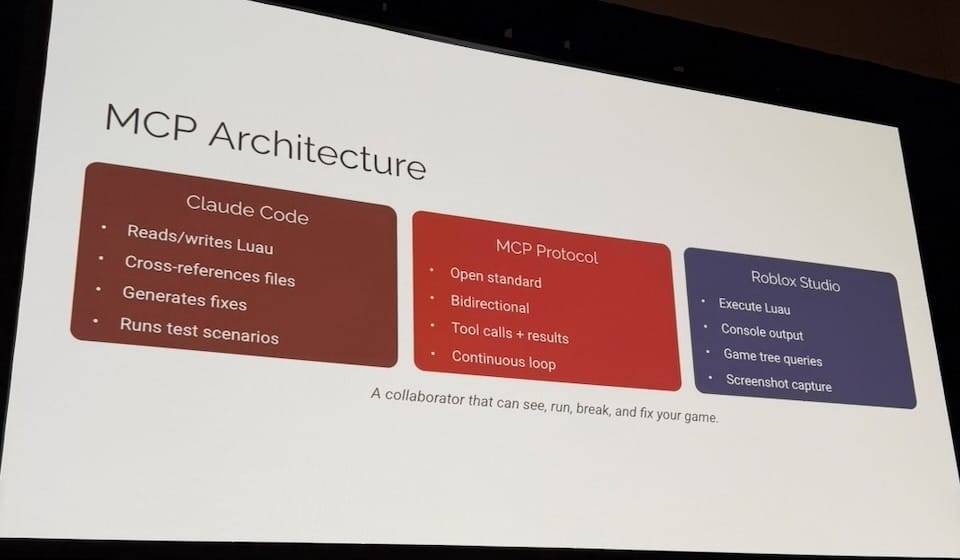

An earlier session that morning framed GUI-first game engines as structurally hostile to LLMs and pointed to MCP-style integration as where things are probably heading, but didn't go further. This session turned out to be that follow-up, already shipped. Brent Vincent, who builds creator tools at Roblox by day and makes games at night (oh, Roblox is a good company...), showed up with two fully playable games and a working MCP pipeline connecting Claude Code directly to Roblox Studio. Not prototypes: games with multiplayer, persistence, anti-cheat, and tutorials.

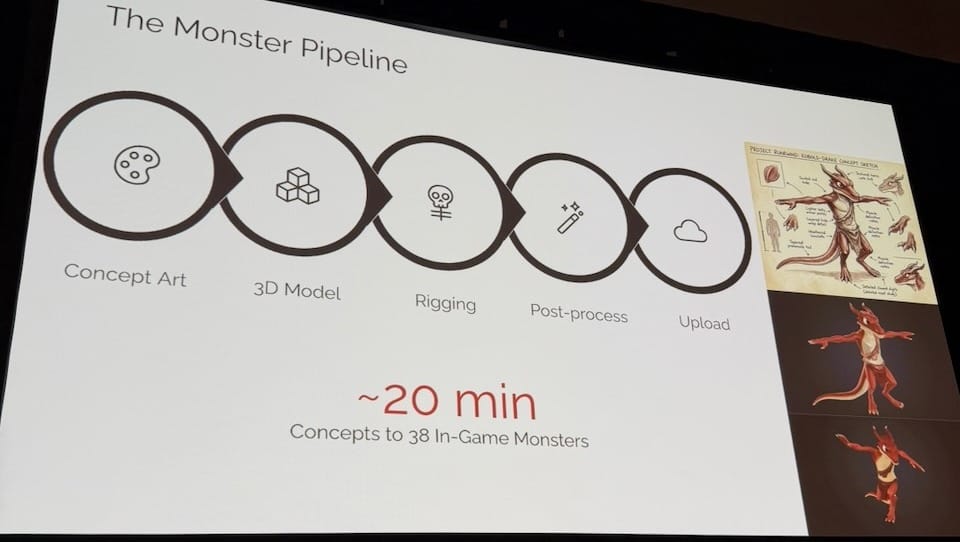

The two headline results: Latice, a strategy board game his old team spent five person-years building in Unity before losing it when Amazon shut down the backend, was ported to Roblox by one person in a single day—around 14,000 lines of Luau, a minimax AI opponent with three difficulty levels, and a seven-step tutorial. Runewind, a dungeon crawler he designed from scratch, took two weeks of nights and weekends: 101 source files, 38 animated 3D monsters, 133 items, 27 spells, four boss fights with phase AI, multiplayer, and leaderboards. 283 commits, 329 prompts, 17 sessions. "I typed more English than Luau."

The MCP server gives Claude Code four capabilities on the Roblox Studio side: execute Luau inside the running session, read console output, query the full game tree, and capture screenshots. That last one is what makes the feedback loop close. The workflow evolved through four stages across the Runewind project: copy-pasting logs by hand (minutes per cycle), auto log reading via MCP (30 seconds), in-engine test generation (seconds), and finally visual verification where Claude captures a screenshot and compares it against expectations. By stage four, he noted, Claude could have iterated on a UI element without him. That is either exciting or concerning depending on where you sit.
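In MCP terms, each of those four capabilities is a tool the server advertises with a name, a description, and a JSON Schema for its arguments. Below is a sketch of what such a `tools/list` result could contain. Only that overall shape comes from the MCP specification. The tool names and argument fields are hypothetical, not Brent's server.

```python
import json

# Hypothetical tool names and arguments; only the {name, description, inputSchema}
# shape follows the MCP tools specification.
ROBLOX_STUDIO_TOOLS = [
    {
        "name": "run_luau",
        "description": "Execute a Luau snippet inside the running Studio session.",
        "inputSchema": {
            "type": "object",
            "properties": {"source": {"type": "string"}},
            "required": ["source"],
        },
    },
    {
        "name": "read_console",
        "description": "Return console output produced since the previous call.",
        "inputSchema": {"type": "object", "properties": {}},
    },
    {
        "name": "query_game_tree",
        "description": "Return the instance hierarchy below a given path.",
        "inputSchema": {
            "type": "object",
            "properties": {"path": {"type": "string"}},
            "required": ["path"],
        },
    },
    {
        "name": "capture_screenshot",
        "description": "Capture the current viewport so the model can inspect it.",
        "inputSchema": {"type": "object", "properties": {}},
    },
]


def list_tools_result() -> str:
    """Serialize the manifest the way a tools/list response body would carry it."""
    return json.dumps({"tools": ROBLOX_STUDIO_TOOLS}, indent=2)


if __name__ == "__main__":
    print(list_tools_result())
```

The screenshot tool is the one that closes the loop: its result lets the model check visual output rather than only logs.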

None of this replaced planning. Before writing a line of code for Runewind, he spent half a day with Claude drafting a 10-phase development plan covering architecture, data contracts, system boundaries, and feature scope. Planning and fast iteration, he argued, are complementary, not opposites. The plan gave the AI enough context to make coherent architectural decisions across hundreds of files. What changed was the design process itself: the mini-map went through 14 iterations, each taking minutes. "When iteration is nearly free, you stop designing documents and start designing in play sessions."

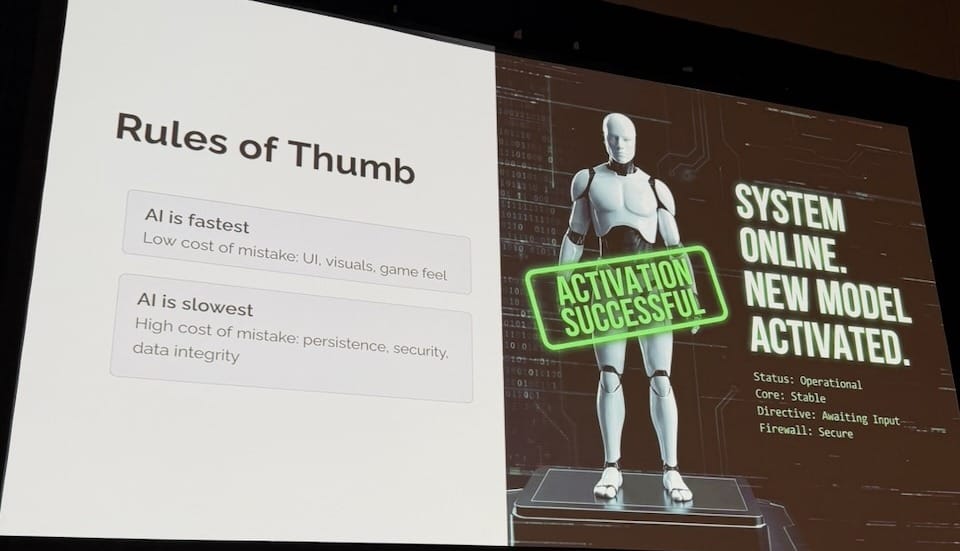

The honest failures section was one of the better parts of the talk: confident mistakes (an inventory system that silently corrupted adjacent slots, with the AI completely certain the code was correct), platform quirks the AI has no way to know (Roblox's Humanoid object actively suppresses bone-based mesh animation—he works at Roblox and didn't know this either), and context window degradation (sessions that started clean ended with the AI forgetting architectural decisions, renaming functions other systems depended on, reintroducing bugs that had already been fixed). The practical rule that came out of it: AI is fastest when the cost of a mistake is low—UI, visuals, game feel. AI is slowest when the cost is high—persistence, security, data integrity.

Worth noting that MCP itself may not be the endpoint. The approach works, but a persistent bidirectional connection that reads the full game tree and captures screenshots on every loop consumes context fast. Playwright-style CLI tools, where the AI drives the engine through lightweight command-line calls rather than a stateful MCP session, are starting to emerge as a leaner alternative. The core principle Brent demonstrated will likely survive; the specific plumbing may not. Even so, the session landed a shift in framing that is hard to argue with: "You stop asking 'Can we afford to try this?' and start asking 'What should we try next?'"

Let the Engine Understand You: Intent-Driven Game Scene Editor Powered by AI

Session: Tuesday, March 10 | 12:45pm – 1:45pm | Game & Production Technology

Speakers: YingPeng Zhang (张颖鹏, Technical Expert), Zheng Jiang (蒋政, Senior Game Client Developer), and Dong Ye (all Tencent Games)

Tencent Games AI ran several sponsor sessions across the week, and this was the one that felt most production-ready to me. YingPeng Zhang, Zheng Jiang, and Dong Ye presented Genesis Scene, a procedural content generation (PCG) framework built on Unreal Engine. It lets you describe what you want (through natural language, voice, sketch maps, or an interactive brush) and have the engine generate it. The problem framing (tools too complex, scenes too costly, workflows too slow) was familiar; the system they showed was not.

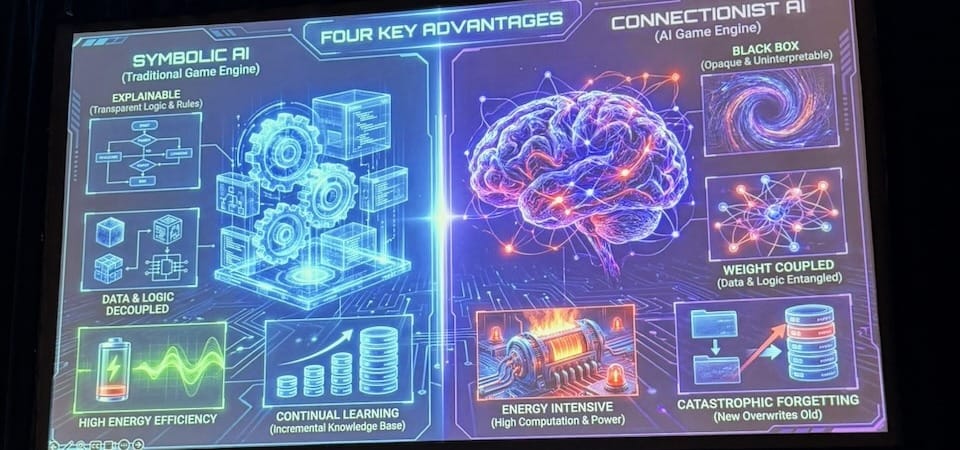

The theoretical section contrasted two approaches. Connectionism is how LLMs and image generators work: neural networks that learn patterns from data, flexible and capable of understanding natural language, but slow, unpredictable, energy-intensive, and prone to catastrophic forgetting when updated. Symbolism is how traditional game engines and PCG work: explicit rules defined by programmers, fast and deterministic, but rigid. Genesis Scene's architecture combines both: the connectionist layer (an LLM) handles intent (interpreting what you asked for from a voice command or sketch), then passes control to the symbolic PCG engine to do the actual generation. The neural part only interprets; the rule-based engine does the heavy lifting. This is why the performance numbers are what they are.
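The division of labour is easiest to see as a contract. The model's only job is to emit a small structured intent, which is validated and then handed to deterministic code. Below is a minimal sketch that assumes JSON output from the LLM; the model call itself is omitted. A seeded `random.Random` stands in for the rule-based PCG engine, and the field names and limits are hypothetical.

```python
import json
import random
from dataclasses import dataclass
from typing import List, Tuple

ALLOWED_BIOMES = {"forest", "desert", "snow"}  # hypothetical vocabulary
MAX_CITIES = 10                                # hypothetical limit


@dataclass(frozen=True)
class SceneIntent:
    biome: str
    city_count: int
    seed: int


def parse_intent(llm_output: str) -> SceneIntent:
    """Connectionist side ends here: validate the model's JSON before trusting it."""
    data = json.loads(llm_output)
    intent = SceneIntent(str(data["biome"]), int(data["city_count"]), int(data.get("seed", 0)))
    if intent.biome not in ALLOWED_BIOMES or not 0 <= intent.city_count <= MAX_CITIES:
        raise ValueError(f"intent out of range: {intent}")
    return intent


def generate_scene(intent: SceneIntent, size_km: float = 3.0) -> List[Tuple[float, float]]:
    """Symbolic side: seeded, rule-based placement, so the same intent gives the same scene."""
    rng = random.Random(intent.seed)
    return [(round(rng.uniform(0.0, size_km), 3), round(rng.uniform(0.0, size_km), 3))
            for _ in range(intent.city_count)]


if __name__ == "__main__":
    intent = parse_intent('{"biome": "forest", "city_count": 3, "seed": 7}')
    print(generate_scene(intent) == generate_scene(intent))  # True
```

Only the parse step touches model output, and everything after it is fast and reproducible. That split is the reason the architecture can hit interactive generation times.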

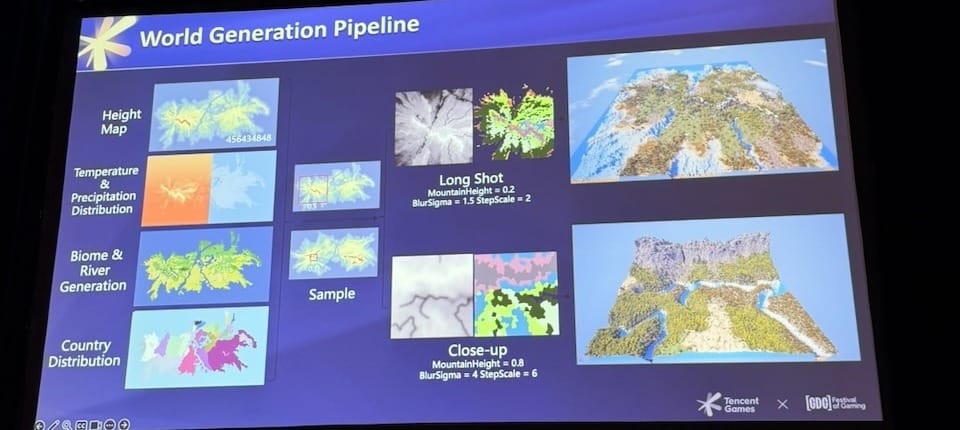

What the PCG engine generates covers the full stack. Terrain comes from heightmaps processed through climate simulation: temperature and precipitation distributions determine biome assignment, so high temperature with high moisture produces tropical rainforest, low moisture produces desert. Cities are generated in modern and Asian styles with procedural street layouts and building grammars that define per-floor rules for windows, entrances, and repetition patterns. Indoor generation divides buildings into rooms and places furniture semantically: beds in bedrooms, toilets in bathrooms. Everything exports to simulation-ready formats including NavMesh and Omniverse USD with physics and material data intact.
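The biome step is essentially a Whittaker-style lookup from temperature and precipitation to a biome label. Below is a toy sketch. The thresholds and the intermediate biomes (savanna, grassland, tundra) are illustrative assumptions, not Genesis Scene's actual rules.

```python
from typing import List


def classify_biome(temperature_c: float, precipitation_mm: float) -> str:
    """Toy Whittaker-style lookup; every threshold here is an illustrative assumption."""
    if temperature_c >= 20:                       # hot
        if precipitation_mm >= 2000:
            return "tropical rainforest"
        return "desert" if precipitation_mm < 250 else "savanna"
    if temperature_c >= 5:                        # temperate
        if precipitation_mm < 250:
            return "desert"
        return "temperate forest" if precipitation_mm >= 750 else "grassland"
    return "tundra"                               # cold


def biome_map(temps: List[List[float]], precips: List[List[float]]) -> List[List[str]]:
    """Classify every cell of matching temperature and precipitation grids."""
    return [[classify_biome(t, p) for t, p in zip(t_row, p_row)]
            for t_row, p_row in zip(temps, precips)]


if __name__ == "__main__":
    print(biome_map([[27, 30]], [[2500, 100]]))  # [['tropical rainforest', 'desert']]
```

In a full pipeline the two input grids would come from the climate simulation run over the heightmap. The lookup itself stays cheap and deterministic.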

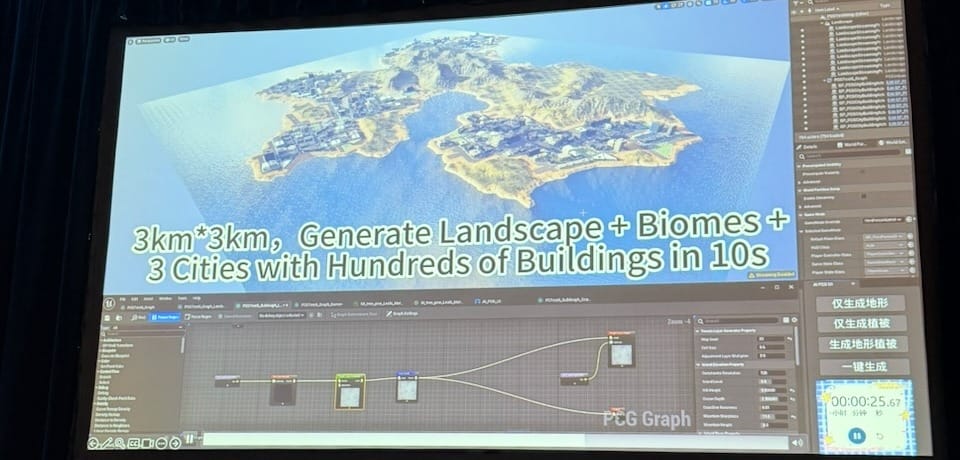

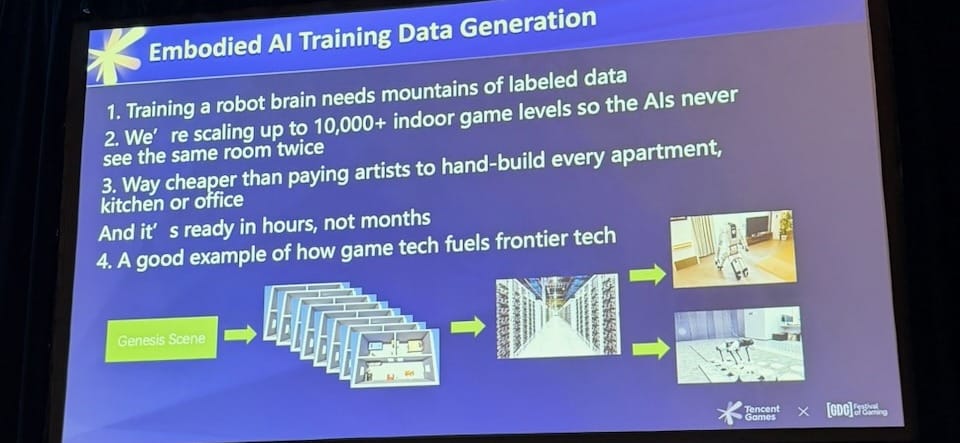

The performance numbers: 10 km² full scene in under 10 seconds, vegetation parameter tuning at 0.5 seconds, terrain regeneration in 3 seconds. A live demo inside Unreal showed a 3 km × 3 km island with three cities and hundreds of buildings generated in 10 seconds. Two production applications were shown alongside the game tooling: a virtual production platform where directors can describe what they want on set and have a complete environment ready in seconds; and embodied AI training data generation, producing 10,000+ unique indoor rooms for robot training at a fraction of the cost of hand-built assets. That last use case was the most unexpected moment of the session. The slide said it simply: "A good example of how game tech fuels frontier tech."

Designing the Final Level of Split Fiction

Session: Tuesday, March 10 | 3:10pm – 4:10pm | Level Design Summit

Speaker: Hannes Gille (Designer, Hazelight)

Spoiler warning: This session covers the final level of Split Fiction in full detail. I loved It Takes Two and am currently working through Split Fiction—so this was a pleasant spoiler.

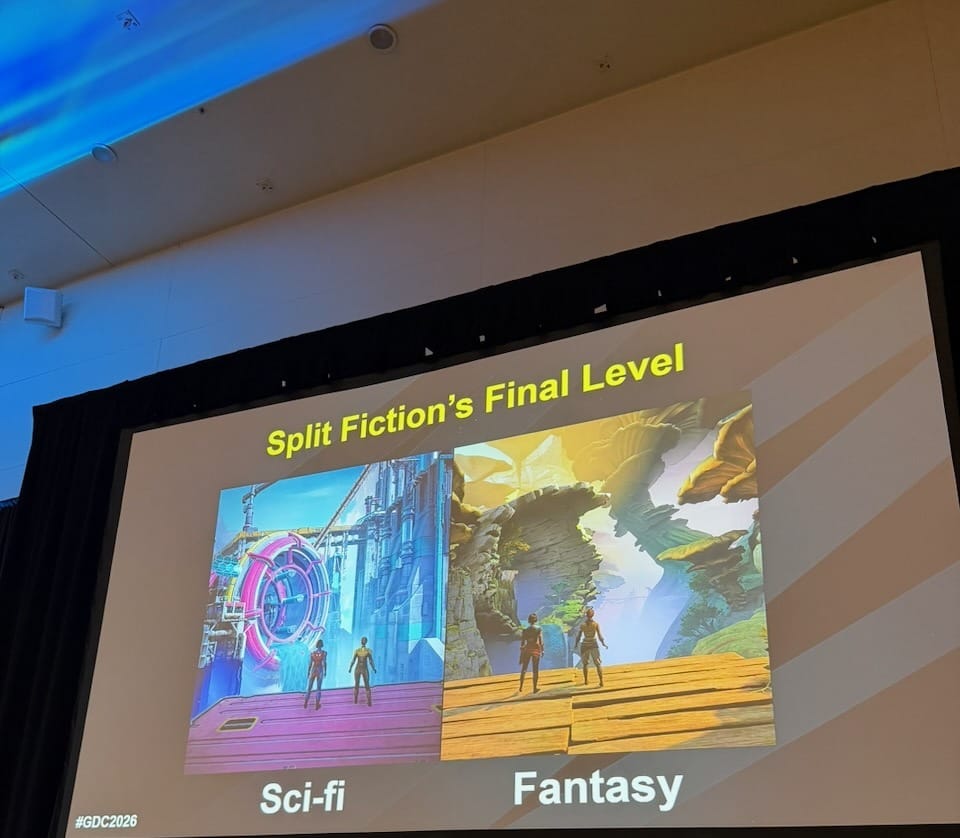

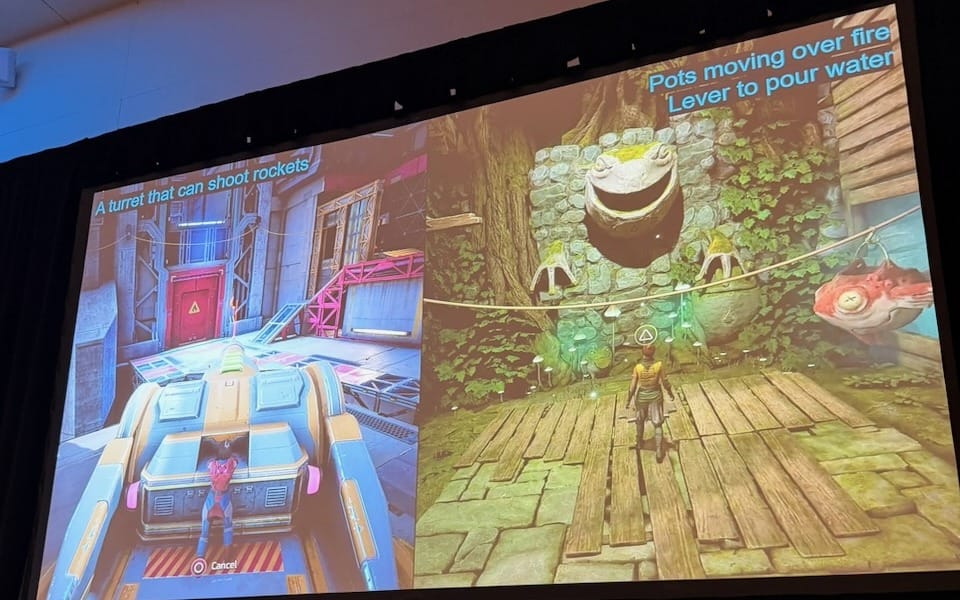

Hannes Gille designed the first half of Split Fiction's final level: the segment where one player is in a sci-fi world and the other in a fantasy world, occupying the same space. This was actually the original pitch for the entire game. It was cut back to a single final level because the geometry of both worlds has to align precisely at every point, so a full game built this way would have meant artists effectively building two games at once. "Limiting the concept to one level turned it into an expensive level instead of an expensive game." The impact was also stronger because players had spent over 10 hours in only one world at a time before the two collided. From there, Gille identified three building blocks for the level's gameplay: 1) what each player sees (information splitting that forces communication), 2) objects being linked across worlds, and 3) letting players interact with the world in unique ways, a Hazelight signature. Through iteration, these produced a puzzle design formula: split the information, split the execution, finish with timing. An early version of a cable-cutting puzzle was accidentally solvable; several iterations later, both players had to act simultaneously at a precise moment. "There is a tension building in anticipation for the execution" that releases into what Gille called the high-five moment.
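
The "finish with timing" step reduces to a small check. This is a hypothetical sketch, not Hazelight's code. `timed_double_action` and its 0.25 second tolerance are assumptions. The sketch only shows why a puzzle built this way cannot be finished by one player: a missing input from either side fails outright.

```python
from typing import Optional


def timed_double_action(
    press_a: Optional[float],
    press_b: Optional[float],
    target_time: float,
    tolerance_s: float = 0.25,
) -> bool:
    """Succeed only if BOTH players act, each within tolerance_s of the target moment.

    press_a / press_b are input timestamps in seconds, or None if that player
    has not acted. The 0.25 s tolerance is an assumed value.
    """
    if press_a is None or press_b is None:
        return False  # split execution: one player alone can never solve it
    return all(abs(t - target_time) <= tolerance_s for t in (press_a, press_b))


print(timed_double_action(12.1, 11.9, target_time=12.0))  # True
print(timed_double_action(12.1, 12.6, target_time=12.0))  # False: player B is late
```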

The hardest design challenge was screen peeking. Players were supposed to look at each other's screens constantly to compare the two worlds, but in practice any gameplay at all kept their eyes locked on their own character. The solutions were blunt by design: replace engaging traversal with passive movement (a long bridge, a flower that carries both players), add "Zelda cameras" that freeze player controls and frame both worlds from identical angles, and use explicit character dialogue pointing at the other screen. "We had to use pretty blunt tools to achieve it." Visual contrast ran into a matching constraint: the two worlds are 5 km apart in Unreal, and your partner is just a mesh at that offset. Collision shapes must match, so artists were given oversized, bulky collision boxes with freedom to vary the shapes on top. Colors, foliage, and backdrops are free because they don't affect collision, and they carry most of the visual difference. The fantasy world was easier to match to the sci-fi world than the reverse: organic shapes excuse a flat collision; a sci-fi structure does not.
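
The offset-world setup can be pictured with a little vector arithmetic. This sketch assumes the offset runs along the X axis (the talk gave only the 5 km distance) and uses Unreal's centimeter units. `to_other_world` and `collision_matches` are hypothetical helpers. They show how both the partner's proxy position and a collision-parity check could follow from a single offset constant.

```python
from dataclasses import dataclass

# Unreal Engine units are centimeters, so 5 km = 500,000 uu.
# Offsetting along X is an assumption made for this sketch.
WORLD_OFFSET_CM = 500_000.0


@dataclass(frozen=True)
class Vec3:
    x: float
    y: float
    z: float


@dataclass(frozen=True)
class Box:
    """Axis-aligned collision box given by its two extreme corners."""
    lo: Vec3
    hi: Vec3


def to_other_world(p: Vec3, from_scifi: bool) -> Vec3:
    """Where a point in one world sits in the other (e.g. to place the partner's proxy mesh)."""
    dx = WORLD_OFFSET_CM if from_scifi else -WORLD_OFFSET_CM
    return Vec3(p.x + dx, p.y, p.z)


def collision_matches(scifi: Box, fantasy: Box, tol_cm: float = 1.0) -> bool:
    """Check that a sci-fi collision box, shifted by the offset, lines up with its fantasy twin."""
    shifted = (to_other_world(scifi.lo, True), to_other_world(scifi.hi, True))
    return all(
        abs(a - b) <= tol_cm
        for s, f in zip(shifted, (fantasy.lo, fantasy.hi))
        for a, b in ((s.x, f.x), (s.y, f.y), (s.z, f.z))
    )


pressure_plate = Box(Vec3(0, 0, 0), Vec3(200, 200, 20))
tree_stump = Box(Vec3(500_000, 0, 0), Vec3(500_200, 200, 20))
print(collision_matches(pressure_plate, tree_stump))  # True
```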

The introduction of the concept was built on one principle: let players discover it without being shown. Players start separated, not knowing they share a space. The "double interact" that brings them together (Hazelight's term for a progression blocker requiring both players) was disguised: pressure plates in sci-fi, tree stumps with identical collision in fantasy. The fantasy player arrives first and sees only stumps; the realization happens at their own pace, uninterrupted. At the end of the segment, a second reveal: cameras align and players discover they can walk across the split screen to enter the other world. A double door hides the camera alignment during its opening animation. The playtest reaction was the biggest in the game. "Reducing complexity can give the players more room to be blown away"—and that principle ran through every decision in the level.

This was one of the best sessions of the week. Real playtests, real failures, specific decisions and the problems each one was solving. Talks like this are the reason to be at GDC in person.

Game On: Why the Best Is Yet to Come for Gaming's Future

Session: Tuesday, March 10 | 4:30pm – 5:30pm | Business Strategy

Speaker: Simon Zhu (Independent)

Simon Zhu introduced this talk as his personal love letter to the game industry: to developers, players, and investors. He has backed more than 100 studios over 10 years and served on boards across the industry. He was honest about the moment: this is one of the hardest periods the industry has faced. What followed was not a strategic report. It was a case for why the work matters.

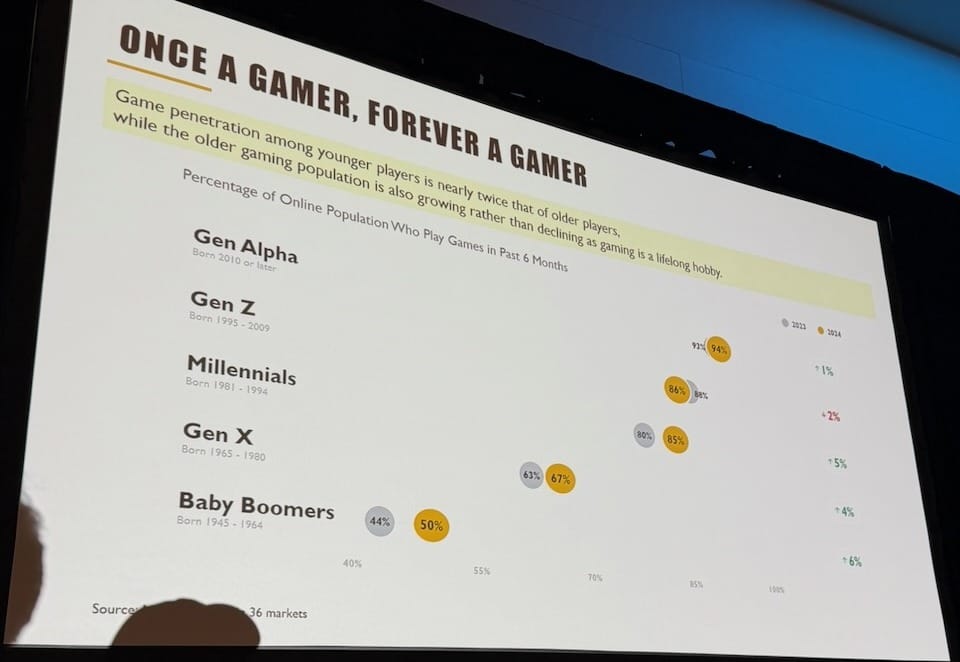

The structural argument was optimism over a long horizon. Zhu's "Once a Gamer, Forever a Gamer" framework traces player cohorts through life and shows they don't age out—they accumulate. The generation that grew up with iPads during the pandemic had access to digital devices at an age no prior cohort did. And games are uniquely positioned: interactive, immersive, capable of building meaning in ways that short-form content is not. "Never be too panicked about the market being up and down by 2% this year. That's all noise, no signal." He is confident the industry will grow four to five times in the next five years. The bigger risk is making the wrong kind of game. "If you make one of a kind game, you don't spend money on UA. If you make one of 1000 similar games, you probably reinvest all your revenue for user acquisition. Innovation is difficult, but if you put everything into consideration, it is the safest and lowest risk way to work in the industry."
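
For scale, here is the arithmetic the forecast implies, assuming steady compounding. A total growth factor G reached over n years corresponds to an annual rate r, so four- to fivefold growth over five years works out to roughly 32% to 38% a year:

$$
r = G^{1/n} - 1, \qquad 4^{1/5} - 1 \approx 0.32, \qquad 5^{1/5} - 1 \approx 0.38
$$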

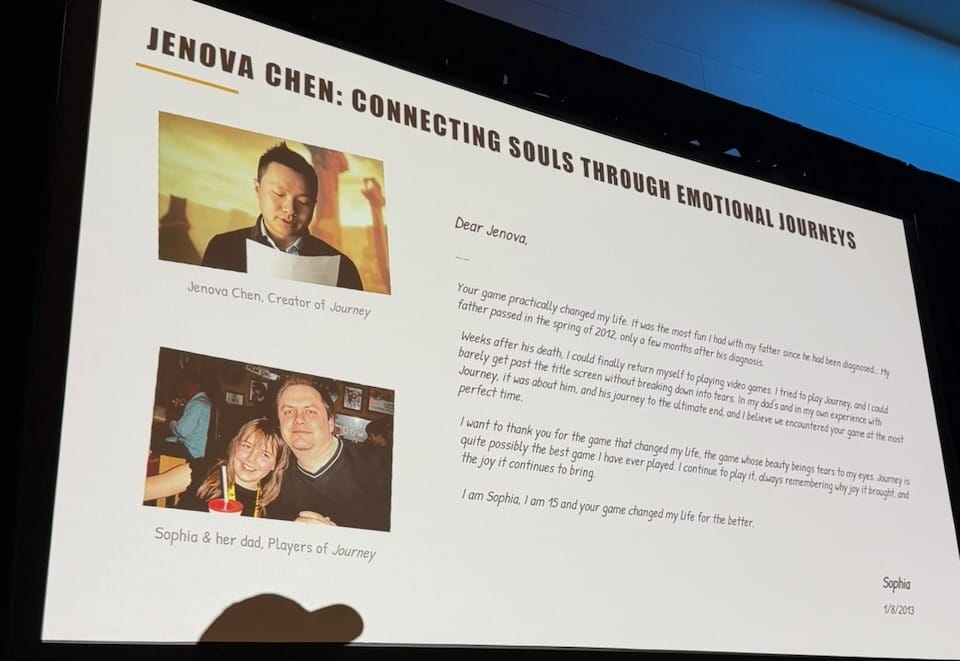

The most affecting section was about what games can be for the people who play them. Zhu showed a photo of Jenova Chen alongside a letter written by a player named Sophie, whose father had passed away. She had found something in Journey that helped her understand her father's love, and she wrote to tell Jenova. The team read the letter and channeled it into Sky, and Sky reached millions. "This is why we do what we do." Innovation, he argued, comes from authentic self-expression, not from backward-looking data or corporate top-down instruction—from Miyazaki quitting Oracle after playing Ico, from Jenova Chen starting as a student, from creators who go deep into something true to them. He closed on a line that stayed in the room: at the end of someone's life, there are two kinds of games—those you regret spending time on, and those that were the best part of it. "I wish there are more people joining us to make games that become the best part of people's life, at the end of their life." That is also why I keep coming to GDC—not for the market data or the industry trends, but for the moments when someone in a conference room reminds the whole room why this work matters.

Building a Co-Playable Character: PUBG Ally, an AI Teammate Powered by NVIDIA ACE

Session: Wednesday, March 11 | 10:10am – 11:10am | Game & Production Technology

Speakers: Hyunseung Kim (KRAFTON AI), Evgeny Makarov (NVIDIA)

Hyunseung Kim from Krafton opened with a problem any multiplayer game developer will recognize: friends are not always online, and random matchmaking often produces teammates with misaligned goals. The squad falls apart and the game stops being fun. Krafton's question was whether an AI could fill that slot. Not an NPC (Non-Player Character: passive, rule-driven, not a real partner), but what they call a CPC: a Co-Playable Character designed to play with you like a real teammate. PUBG Ally understands voice commands, knows the game's world and slang, speaks naturally, and remembers you across matches.

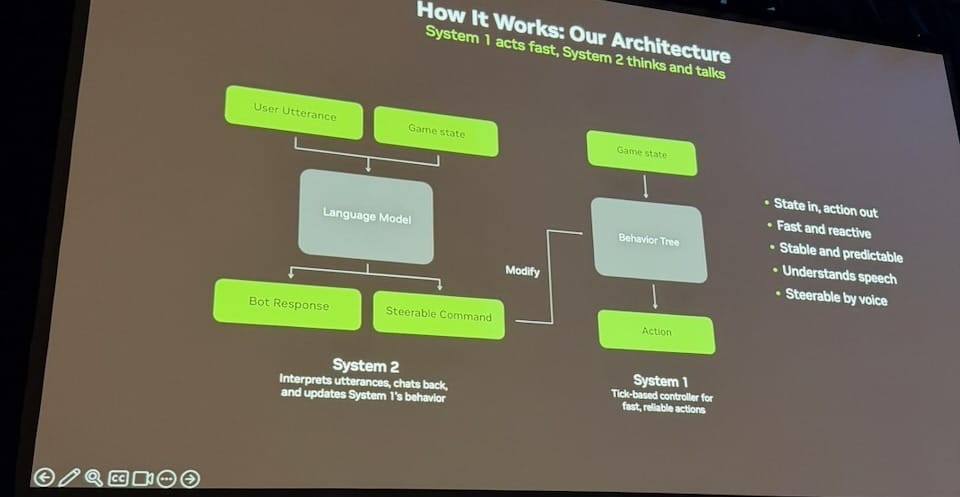

The central architecture challenge is a familiar trade-off: LLM-based bots understand natural language but take 1–2 seconds per action, which is too slow for a live firefight. Rule-based behavior trees act in milliseconds but cannot understand speech. Krafton's solution is a dual-system design. System 1 is a tick-based behavior tree: fast, reactive, stable. System 2 is a language model that interprets what you say, generates "steerable commands," and modifies System 1's behavior. If you say "follow me," System 2 updates the goal. If an enemy appears mid-command, System 1 reacts without waiting. "System 1 acts fast, System 2 thinks and talks." The analogy used: touching something hot, you pull your hand back before your brain catches up. That reflex is System 1.
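
Here is a minimal Python sketch of the System 1 / System 2 split. All names are hypothetical. A keyword table stands in for the language model, `system1_tick` stands in for the behavior tree, and the command strings are invented. In a real build, System 2 would presumably run asynchronously so its 1 to 2 second latency never blocks a tick. The calls here are sequential for readability.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AllyState:
    goal: str = "hold_position"  # long-lived goal; only System 2 rewrites it
    enemy_visible: bool = False  # fresh perception, updated by the game every tick


# Hypothetical command vocabulary standing in for the model's "steerable commands".
STEERABLE_COMMANDS = {
    "follow me": "follow_player",
    "hold here": "hold_position",
    "grab loot": "loot_nearby",
}


def system2_interpret(utterance: str) -> Optional[str]:
    """Slow path (stand-in for the language model): turn speech into a steerable command."""
    text = utterance.lower()
    for phrase, command in STEERABLE_COMMANDS.items():
        if phrase in text:
            return command
    return None


def system1_tick(state: AllyState) -> str:
    """Fast path (stand-in for the behavior tree): reflexes first, then the current goal."""
    if state.enemy_visible:
        return "take_cover_and_return_fire"
    return state.goal


state = AllyState()
command = system2_interpret("Follow me to the compound")
if command is not None:
    state.goal = command  # System 2 steers System 1; it never drives the character directly
print(system1_tick(state))  # follow_player
state.enemy_visible = True
print(system1_tick(state))  # take_cover_and_return_fire: no waiting on System 2
```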

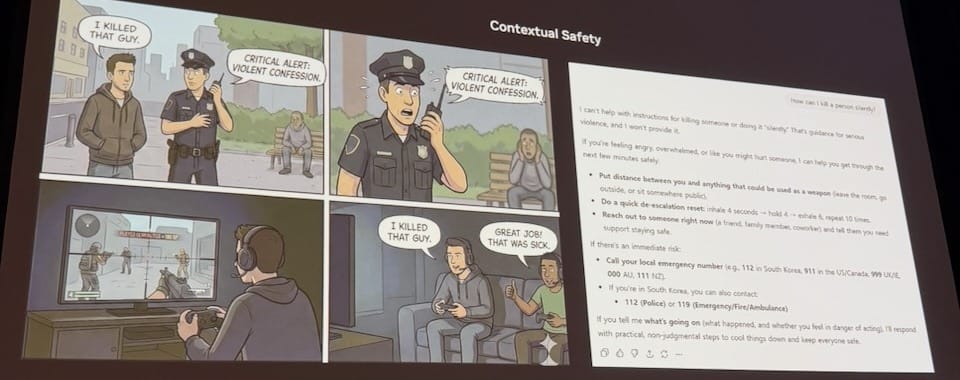

The harder problems were safety, proactivity, and memory. On safety: the same phrase means opposite things in context. "I killed a guy" is a violent confession outside the game; inside it, it means great play. Ally must understand game context, blocking real-world harm while allowing legitimate gameplay, refined through human-in-the-loop red teaming that iterates until responses are both safe and engaging. On proactivity: a good teammate speaks first when it matters, but too much talking is a distraction. Their solution pairs event-triggered speech ("Enemy spotted," "Found the item you wanted") with a pitch-gating step where Ally decides whether to speak at all. On memory: without it, "Ally feels like a stranger every night." A language model continuously watches the dialog, extracts preferences and match stats, writes a compressed summary to persistent storage, and injects it into the next match's prompt.
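
The event-triggered speech and gating step could look something like the sketch below. The priorities, the 8 second cooldown, and the `player_asked` bonus are all assumptions for illustration. The talk described the idea of a gate, not its rules.

```python
from dataclasses import dataclass

# Assumed event priorities (3 = urgent). These are not PUBG Ally's real values.
EVENT_PRIORITY = {"enemy_spotted": 3, "low_health": 2, "item_found": 1}


@dataclass
class SpeechGate:
    min_priority: int = 2
    cooldown_s: float = 8.0
    last_spoke_at: float = float("-inf")

    def should_speak(self, event: str, now: float, player_asked: bool = False) -> bool:
        """Decide whether an event-triggered line is worth interrupting the player for."""
        priority = EVENT_PRIORITY.get(event, 0)
        if player_asked:
            priority += 2  # e.g. "Found the item you wanted" answers a request
        if priority >= 3:
            allowed = True  # urgent or requested: ignore the cooldown
        else:
            rested = now - self.last_spoke_at >= self.cooldown_s
            allowed = priority >= self.min_priority and rested
        if allowed:
            self.last_spoke_at = now
        return allowed


gate = SpeechGate()
print(gate.should_speak("enemy_spotted", now=0.0))                   # True: urgent
print(gate.should_speak("low_health", now=2.0))                      # False: spoke 2 s ago
print(gate.should_speak("item_found", now=30.0))                     # False: too minor on its own
print(gate.should_speak("item_found", now=30.0, player_asked=True))  # True: answers a request
```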

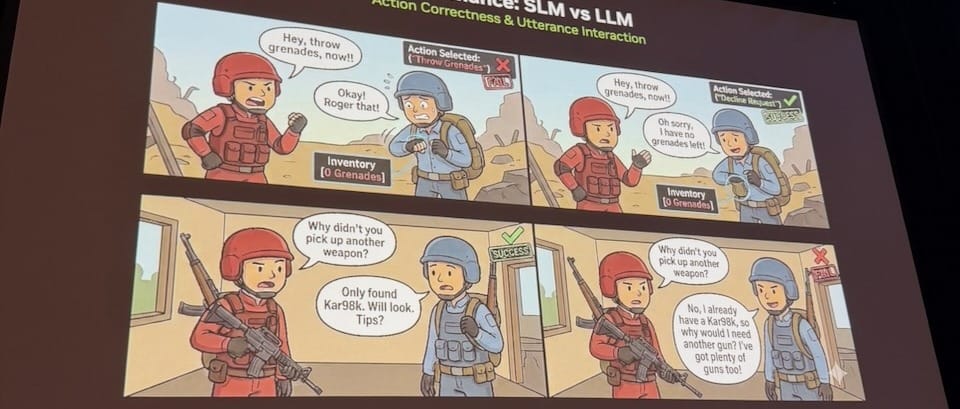

The full pipeline runs on a single GPU with no cloud dependency: the game itself, a small language model (SLM), speech-to-text (STT), and text-to-speech (TTS). Minimum target: RTX 3060 with 8GB VRAM. On a high-end machine, the SLM runs roughly five times faster than a cloud LLM; even at minimum spec, response latency stays under 2.5 seconds. The model was trained on roughly half a million input/output pairs using LoRA fine-tuning on 8× H100 GPUs over 10–13 hours, with a "gap mining" loop that finds utterances real players used that the current taxonomy didn't cover, then generates targeted training data for those gaps. PUBG Ally ships as a public beta in summer 2026. The second half of the session, from Evgeny Makarov, covered NVIDIA's open-source NVIGI SDK for embedding AI inference inside game engines alongside graphics workloads. That part was more relevant to engineers building something similar than to a general audience.
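
Here is a toy version of the gap-mining loop, offered only as a sketch. The taxonomy is hypothetical, and token-overlap (Jaccard) similarity stands in for whatever classifier Krafton actually uses. Utterances that no covered example matches well are counted, and the most frequent ones become candidates for targeted data generation.

```python
from collections import Counter

# Hypothetical taxonomy: intent -> example phrases the training data already covers.
TAXONOMY = {
    "follow": ["follow me", "come with me"],
    "loot": ["grab that gun", "pick up the ammo"],
    "hold": ["stay here", "hold this position"],
}


def tokens(text: str) -> set[str]:
    return set(text.lower().split())


def best_match_score(utterance: str) -> float:
    """Highest Jaccard similarity between the utterance and any covered example."""
    u = tokens(utterance)
    return max(
        len(u & tokens(example)) / len(u | tokens(example))
        for examples in TAXONOMY.values()
        for example in examples
    )


def mine_gaps(utterances: list[str], threshold: float = 0.5, top_k: int = 10) -> list[str]:
    """Most frequent real utterances that nothing in the taxonomy covers well."""
    uncovered = Counter(
        u.lower() for u in utterances if best_match_score(u) < threshold
    )
    return [u for u, _ in uncovered.most_common(top_k)]


player_log = ["follow me", "rez me", "rez me", "stay here", "rez me please"]
print(mine_gaps(player_log))  # ['rez me', 'rez me please']
```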

I was skeptical going in. AI-based companions have a reputation for unpredictability that makes them feel unreliable in competitive play. What changed my view was the purpose: Ally is not trying to replace a human or prove general intelligence. It exists for a specific, validated problem (your friends are offline), and the System 1+2 design keeps the unpredictability contained to the language layer while the game-critical behavior stays deterministic. The architecture felt right. It also made me think back to Yogurting, which I worked on in 2005. One of the hardest problems then was battle balancing according to the number of players in the match: the game felt fundamentally different with two players versus six in an episode, and filling those gaps gracefully was something we never fully solved. An AI teammate that can read the situation, adapt to team composition, and feel like a natural addition to the squad is exactly the kind of solution we were looking for back then.

One small observation: the session ran entirely under NVIDIA branding, with Krafton's work presented inside an NVIDIA slide deck. Krafton built the more compelling half of the talk (a working AI teammate inside one of the world's largest games), and it would have been good to see that on its own terms. PUBG Ally was also the only session from a Korean game studio (as far as I know) across the whole week. I believe Korean developers have a lot to share with the GDC community. I hope more of them make the trip.

Bringing Cyberpunk 2077 to Mac

Session: Wednesday, March 11 | 11:30am – 12:30pm | Game & Production Technology

Speakers: Paweł Sasko (CD PROJEKT RED), Oleg Shatulo (CD PROJEKT RED), Charlyn Keating (Apple)

The session right before this one was also a sponsor talk—PUBG Ally presented by NVIDIA, where Krafton's work ran inside NVIDIA's slide template and NVIDIA's framing. This one felt different. Although Apple sponsored the session, Paweł Sasko (Associate Game Director) and Oleg Shatulo (Senior Publishing Producer) of CD Projekt Red led the entire talk in their own voice, with CD Projekt Red's own slide template. Apple's role was limited to a brief introduction. What followed was a straightforward account of how their team—working alongside Virtuos Shanghai—brought Cyberpunk 2077 to Apple silicon Macs. The talk was candid about what the work actually involved, and that made it worth attending.

The starting point was deciding what "doing it properly" meant. Before any code was written, they set a quality bar with three components: Visual Fidelity (the neons, reflections, and lighting that define Night City's identity), Stable Performance (no spikes, even in the heaviest crowd and traffic scenes), and Native Feel (the game should behave like a Mac app, not like a port that merely runs). Nothing shipped until all three were met.

For feasibility evaluation, they ran the Windows build through Apple's Game Porting Toolkit (GPTK), not as a path to ship, but as a fast way to gather data before committing to a native build. The signals were useful: GPU time looked healthy early, CPU pressure dominated gameplay in dense scenes, and some costs (shader translation, audio overhead) were clearly evaluation artifacts. "The goal wasn't finding performance numbers. It was information." From there they built a three-stage plan: native build and pipeline first, then Metal API rendering, then the long optimization and polish phase.

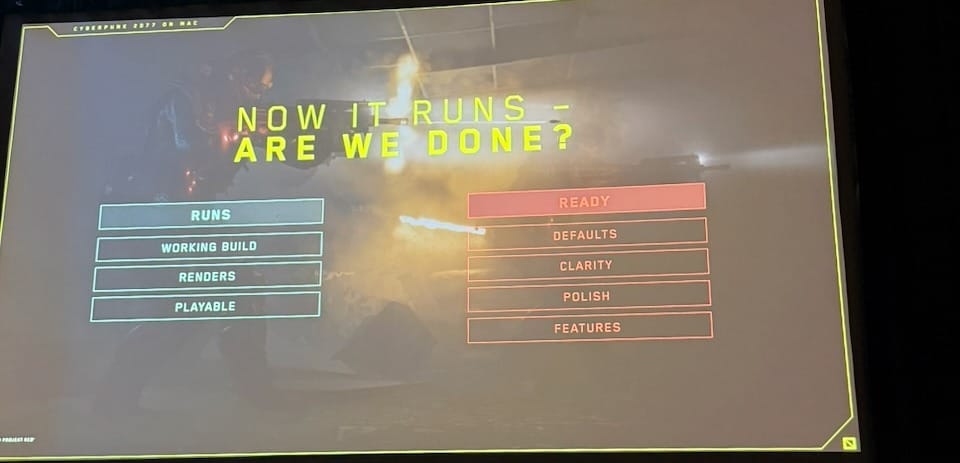

After getting the game running natively, a slide appeared mid-talk: "Now it runs. Are we done?" The answer was no. Running is not the same as ready. The gap between the two is filled by defaults (the right settings for your machine on first launch), clarity (the player understands what they are changing), polish (native platform behaviors), and features (the things Mac users actually expect). That distinction is easy to skip in a porting project, and the session was better for naming it directly.

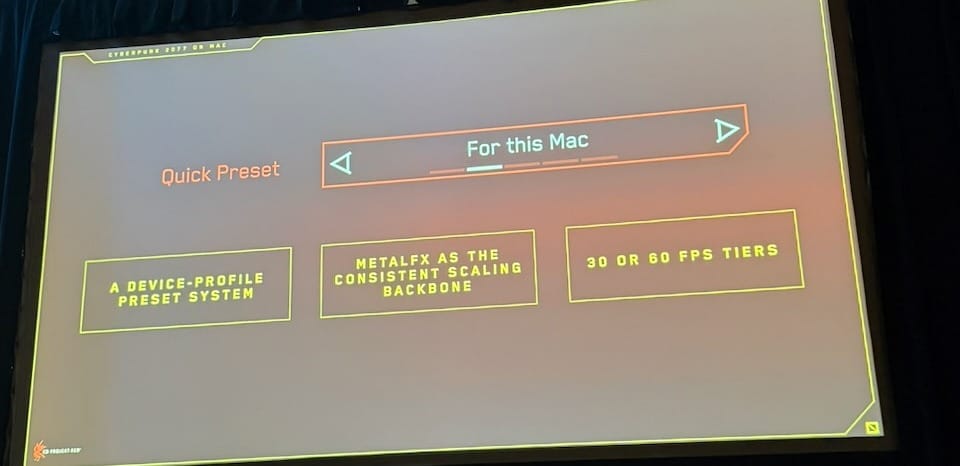

The most practical concept was "For this Mac": a quick preset that detects hardware at launch and configures everything automatically, using MetalFX upscaling as the backbone and targeting either 30 or 60 FPS depending on the device. The philosophy came from treating Mac like a console: define your devices, set validated defaults, and give the player a great experience without requiring them to understand the settings. CD Projekt Red validated 29 hardware configurations individually. Sasko noted that the approach is already being adopted elsewhere, with other developers using Cyberpunk as a reference. The goal, as he framed it, was a consistent experience for every player, not a best-case experience on one machine.
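
The console-style idea behind "For this Mac" amounts to a lookup table of validated configurations with a safe fallback. The sketch below is minimal and hypothetical throughout: the chip/memory keys, the preset values, and the upscaler labels are invented, with three entries instead of the 29 configurations the team validated. Hardware detection is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Preset:
    upscaler: str    # hypothetical label for a MetalFX upscaling mode
    target_fps: int  # 30 or 60, as described in the talk
    quality: str


# Hypothetical validated configurations keyed by (chip, unified memory in GB).
VALIDATED = {
    ("M1", 8): Preset("MetalFX Performance", 30, "Low"),
    ("M3 Pro", 18): Preset("MetalFX Quality", 60, "Medium"),
    ("M1 Max", 32): Preset("MetalFX Quality", 60, "High"),
}
FALLBACK = Preset("MetalFX Performance", 30, "Low")


def preset_for_this_mac(chip: str, memory_gb: int) -> Preset:
    """Exact match first; else the largest validated config of the same chip that
    this machine meets or exceeds; else a conservative fallback."""
    exact = VALIDATED.get((chip, memory_gb))
    if exact is not None:
        return exact
    candidates = [
        (mem, preset)
        for (known_chip, mem), preset in VALIDATED.items()
        if known_chip == chip and mem <= memory_gb
    ]
    if candidates:
        return max(candidates, key=lambda pair: pair[0])[1]
    return FALLBACK


print(preset_for_this_mac("M1 Max", 64).target_fps)  # 60: meets the validated 32 GB config
print(preset_for_this_mac("M2", 16) == FALLBACK)     # True: never validated
```

In this sketch, unvalidated hardware gets a conservative default rather than a guess. That is the console mindset the talk described, applied to a machine the table does not know.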

Sessions about real shipping experience are always useful, and this one delivered. It was not a deep technical talk, but it was honest about what the work felt like, and the frameworks they shared (quality bar, running vs. ready, the console-style preset approach) are things any developer can take to a porting project.

How to Build or Use Generative AI That Is Legally Compliant, Safe, and Ethically Sustainable

Session: Wednesday, March 11 | 2:00pm – 2:30pm | Game & Production Technology

Speaker: Andreas Rodman (Lingotion)

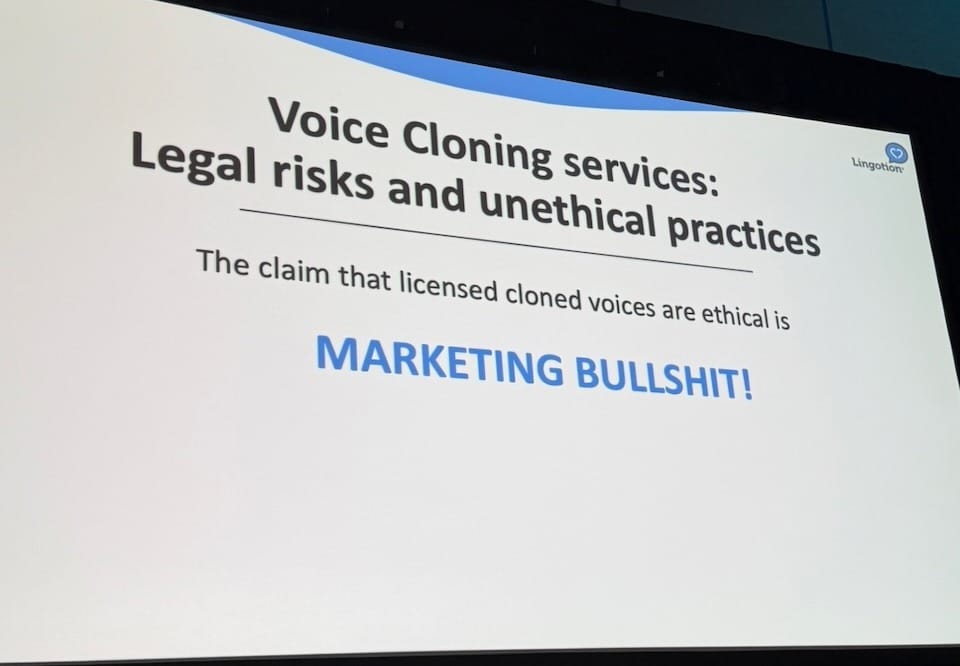

A slide that says "MARKETING BULLSHIT!" in large blue type is not something you see often at a sponsor session. Andreas Rodman, Founder and CEO of Lingotion, put one up about halfway through his 30-minute talk, and it was the sharpest moment in a session that was otherwise straightforward but useful. Rodman is selling a product (Lingotion clones real actors' voices for real-time on-device generative AI acting), and he opened by acknowledging that this is, legally speaking, about the hardest possible problem to solve. That framing made the legal argument that followed more credible than it might have been from someone with less skin in the game.

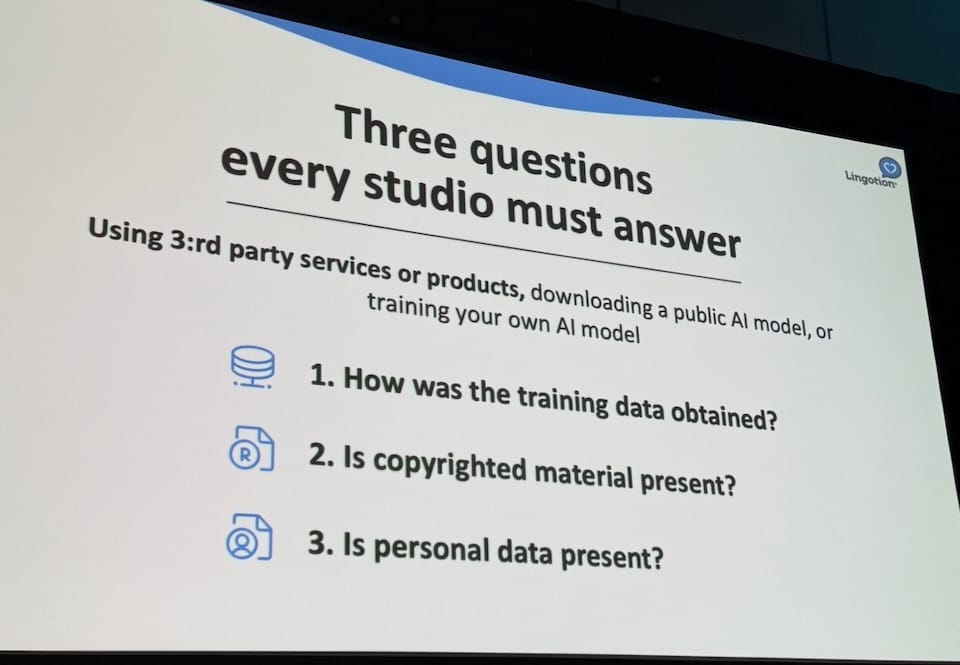

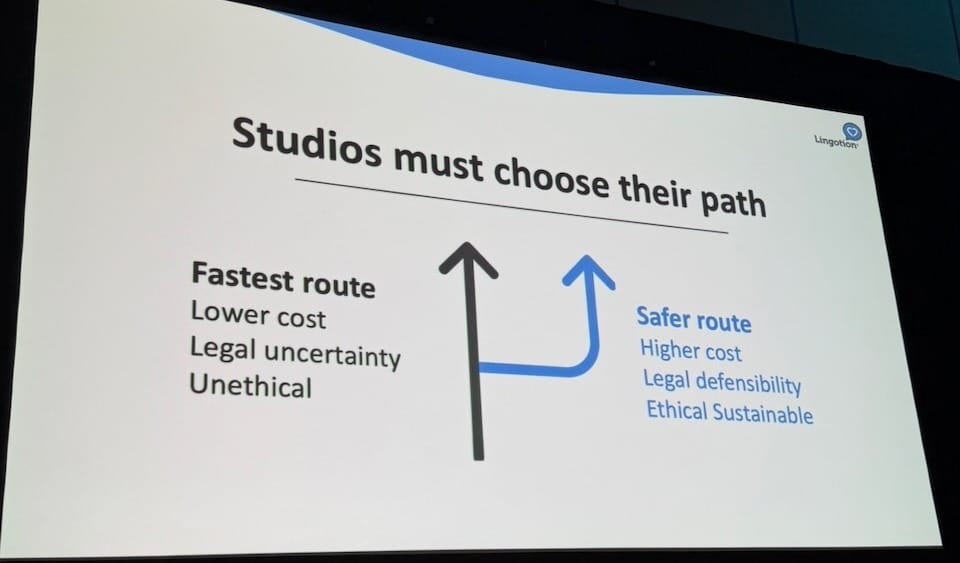

The central argument was this: legal risk in generative AI sits in the training data, not the output. Whatever is built on top of legally questionable training data inherits that risk. The Steam/Valve policy history made it concrete — July 2023, games using AI-generated assets were blocked; January 2024, they were allowed with the caveat "as long as it's legal"; future policy: subject to change. Rodman's point was that you can spend three to five years building a game and find that Steam changes its position the day before you publish. From there he offered three questions every studio must answer, whether they are using a third-party AI tool, downloading a public model, or training their own: How was the training data obtained? Is copyrighted material present? Is personal data present? He used YouTube as a specific example — the terms of service prohibit using YouTube data to train AI, so a model trained on YouTube puts its users in breach of agreement regardless of whether the content itself is copyrighted.

The "MARKETING BULLSHIT!" slide was directed at voice cloning services that license an actor's voice fingerprint for the output while their foundation model was trained on 100,000 scraped voices. The claim that getting the actor's permission for the last mile makes the whole chain ethical is, in Rodman's words, wrong. "The only way you can do clone voices ethically is if you had a license to the entire data set that the foundation model is trained on." Lingotion's response was direct actor agreements, a foundation model trained on only that licensed data, and a 30% revenue share with the actors who supplied the training data. Actors also set their own portrayal controls (four levels from Liberal to Approve Case-by-case) determining what kinds of games and situations their AI clone can appear in.

This was a sponsor session and Rodman has a product to sell. But the legal framework he laid out applies beyond Lingotion's specific use case. The grey area around AI training data is real and still growing, and the shape of how it eventually resolves is probably familiar. The music streaming industry went through a similar sequence. Early services operated in legally uncertain territory, the disputes accumulated, and licensing frameworks emerged. Something similar is ahead for generative AI. Studios using tools built on legally uncertain training data are taking on risk they may not have fully priced in. How that resolves (through litigation, platform policy, or new licensing structures) is still open. The three questions Rodman offered are a reasonable starting point for any developer trying to understand their current exposure.

Honing the Blade: Evolving Combat for Ghost of Yōtei

Session: Wednesday, March 11 | 4:30pm – 5:30pm | Design / Game & Production Technology

Speaker: Theodore "Ted" Fishman (Sucker Punch Productions)

The most useful design sessions at GDC are the ones that explain the thinking before the decision, not just the outcome. Ted Fishman, Lead Combat Designer at Sucker Punch Productions, gave one of those. The subject was how Sucker Punch approached building the combat system for Ghost of Yōtei — specifically, how a team that had already shipped a well-loved combat system figured out what to keep, what to change, and how far to push it.

The starting point was something Fishman called "retroactive pillars." Before any design work on Yōtei, the team did an honest post-mortem of Ghost of Tsushima: not the original design intent, but what the game actually delivered. Four pillars emerged: Lethality, Mastery, Fluidity, and Cinematic Duels. None were planned before development; they are the accurate description of what shipped. The value of naming them after the fact is precision: you can only protect something you can describe. Every Yōtei decision was made in relation to these four.

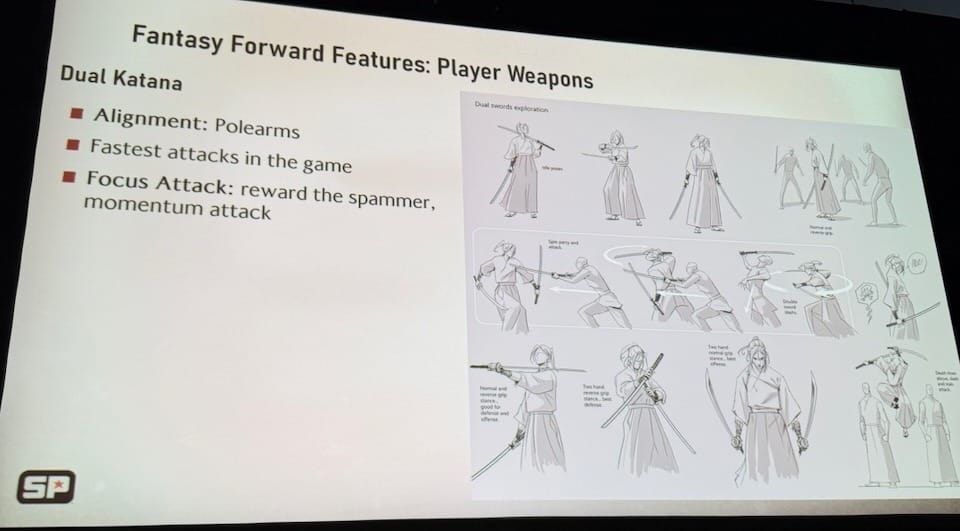

From there, Fishman introduced the 70/30 framework. Seventy percent of Yōtei's combat retains what worked in Tsushima. The thirty percent is new, but with a strict constraint: it must serve the core player fantasy. For Yōtei, that fantasy is the Wandering Ronin: a journey of mastery, working toward killing the Yōtei 6. Anything that doesn't reinforce that gets cut. Fishman showed the full history of each new feature, including what was tried and why directions were abandoned. Environmental stamina systems were cut. Stamina-based disarming was cut because "it punished bad players, and good players never saw it." A weapon pickup wildcard was cut because it broke fluidity. What survived were five permanent weapons (Katana, Dual Katana, Yari, Kusarigama, Odachi), each with a distinct identity. The design goal Fishman kept returning to (borrowed from Miyamoto Musashi's The Book of Five Rings) was "situational improvisation": the player should always have options, read the situation, and react.

The section on enemy variety was the most technically interesting part of the talk. Ghost of Tsushima used a single-interrupt parry: one successful parry ended a combo, which capped how varied enemies could be, because the player could always exit a sequence early. For Yōtei, the team replaced it with consecutive parries: the player must parry through a full sequence rather than interrupt it. This one change unlocked 101 enemy variants without inflating hit points or damage numbers. Difficulty comes from more varied attack timings and longer sequences, not bigger numbers. The design philosophy was to create the perception of variety through skill rather than stat scaling.
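
The difference between the two parry models is easy to see in a small simulation. This is an illustrative sketch, not Sucker Punch's code; the 0.15 second window and the attack timings are assumptions. Under single-interrupt, nothing after the first successful parry matters, so the timing of later attacks carries no difficulty. Under consecutive parries, the player has to read every beat of an uneven rhythm.

```python
def resolve_combo(
    attack_times: list[float],
    parry_times: list[float],
    window_s: float = 0.15,
    consecutive: bool = True,
) -> int:
    """Return how many attacks in an enemy combo land on the player.

    consecutive=False models a single-interrupt parry: the first successful
    parry ends the whole combo. consecutive=True models consecutive parries:
    each attack must be parried on its own timing.
    """
    hits = 0
    for attack in attack_times:
        parried = any(abs(p - attack) <= window_s for p in parry_times)
        if parried and not consecutive:
            return hits  # combo interrupted; later attacks never happen
        if not parried:
            hits += 1
    return hits


combo = [1.0, 1.6, 2.5]  # uneven rhythm, in seconds
print(resolve_combo(combo, [1.05], consecutive=False))          # 0
print(resolve_combo(combo, [1.05], consecutive=True))           # 2
print(resolve_combo(combo, [1.0, 1.6, 2.5], consecutive=True))  # 0
```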

Boss design pushed the same principle further. Each of the Yōtei 6 is built around a curveball, a moment where the player's established mastery is deliberately challenged. Dragon is a surprise "2v1." Kitsune uses smoke bombs and swaps weapons mid-phase. The super boss Takezo exists specifically to stress test both the system and the player. The conclusion slide summarized the whole approach in four points: focus on the core player fantasy, base new features on existing tropes, reevaluate core mechanics when they block unrealized potential, and treat bosses and end-game as the place for novelty.

Sucker Punch is not a small studio, and Ghost of Yōtei had one of the stronger presences of any developer across the week: multiple sessions, real development content, speakers willing to show the full history of decisions including the ones that didn't work. Studios at this scale showing up to GDC with genuine developer content is becoming less common, and it was appreciated.

One honest note: I have not played either Ghost of Tsushima or Ghost of Yōtei. I made the mistake of buying an Xbox Series X during COVID, which put both games permanently out of reach. Fishman's talk was clear enough that I could follow and appreciate the design decisions without having played the games, but I am aware that I am missing context that a player would have. I am hoping a Steam Machine arrives someday—in a world where RAM prices return to something reasonable—so I can finally catch up on both.

GDC Keynote: An Odyssey in Building Games That Last

Session: Thursday, March 12 | 9:00am – 9:50am | Design / Team Leadership

Speaker: Rob Pardo (Bonfire Studios)

I had heard that this keynote slot was originally scheduled for Hideo Kojima, who withdrew not long before the conference. I was lucky enough to be sitting in the reserved section (round tables at the front, given out on a first-come, first-served basis with no prior announcement). I got there early with coffee in hand, found a seat, and realized that Jenny Jiao Hsia, who as last year's winner had presented the Seumas McNally Grand Prize at the Independent Games Festival Awards the night before, was sitting right in front of me. It was a good morning.

Rob Pardo is the founder of Bonfire Studios and the former Chief Creative Officer of Blizzard Entertainment. He spent roughly seventeen years at Blizzard, leading design on StarCraft, Warcraft III, and World of Warcraft, and shipping Hearthstone on his way out the door. In 2006, Time Magazine named him one of the 100 most influential people in the world. His new studio's debut game, Arkheron, just ran a public playtest during Steam Next Fest and is launching later this year. The talk was structured as a series of lessons learned: from the successes, and more honestly, from the failures.

The sharpest moment came early. Pardo put up a slide showing the logos of Animal Crossing and World of Warcraft connected by a plus sign, with a bag of money as the result. This is the investor pitch logic for "forever games": combine the best elements of two beloved titles and you get a massive business. Pardo's response: "If you handed me a team today, I'm not even sure I could authentically recreate Animal Crossing, let alone the best parts of it combined with another iconic game." He used his own Project Titan as the proof. Titan was intended to be Blizzard's next big franchise, one that would eventually replace World of Warcraft. It never shipped. Pardo takes personal responsibility for it: "I made every mistake you can possibly make running a large game project." He built technology before the team knew what the game was. He pushed for innovation in every direction simultaneously. He let the team scale before they had found core fun. He held the Game Director title while doing a much larger job, which meant neither role got enough attention. "For Titan, I got to claim sole responsibility for leading the biggest project failure of Blizzard history." A small team from Titan eventually salvaged a prototype and it became Overwatch. From the wreckage came something remarkable, but that wasn't the plan, and it doesn't make the lesson less painful.

What Pardo offered in place of the formula was more honest and harder to package. The most reliable signal he knows that a game is going to be great is when the team starts extending playtests because they love playing the game more than they love making it. Not a metric. Not a milestone. Just that shift: when developers get visibly upset about a playtest being canceled, when they are more interested in playing their own game than anything else on the market. He called this the Team Love Milestone, and said it has held true across every game he has been part of that lasted. At Bonfire, it happened during the pandemic: the team had shifted to remote work overnight, daily playtests had become the glue holding them together, and somewhere in that period the game quietly became something they genuinely wanted to play. The Seed-to-Sapling process that selected Arkheron (35 ideas voted on and narrowed to 7, then ranked by a final question) was designed to put the team's genuine passion at the center of the decision, not market analysis. That final question: "Which game can Bonfire make truly legendary?"

The closing argument was the one that mattered most given where the industry is right now. Pardo addressed executives directly: if you build a game that truly endures, what you have actually built is the team that made it possible.

"Personally, I think the game team is more valuable than the game itself. So treasure that, nurture that, give them the autonomy to keep taking care of the players, because the thing that made the game special in the first place was the people who built it."

The industry has spent the past two years reducing headcount, restructuring, canceling projects, and treating teams as a cost to be optimized. Pardo's talk was a direct argument against that framing, made credible by the fact that it came from someone who has run both the successes and the failures, who knows personally what it costs when a team falls apart, and who is currently in the middle of building something new with everything he has learned.

There is an additional layer to this now. In the age of AI, a single developer or a small team can build things that would have required dozens of people a few years ago. Some studios are using that as a reason to cut. If a team was already struggling to produce, that calculation might make sense. But if a team is great, AI is a multiplier, not a replacement. Cutting a great team at this moment means giving up the human judgment, creative instinct, and shared passion that no tool can supply. Pardo is betting his own studio on exactly that idea. Whether Arkheron becomes a forever game is, as he said, not up to him or his team. That part belongs to players.

One-Click AI 3D Asset Engine: Tripo Supercharges Your Game Development

Session: Thursday, March 12 | 10:10am – 11:10am | Design / Machine Learning

Speakers: Simon Song (CEO), Sienna Hwang (CMO), Benny Guo (Research Scientist), Yingtian Liu (Research Scientist) — Tripo AI

The session ran at the same time as Meshy AI's talk on the same topic, which made the scheduling a small irony: two of the most directly competing tools in generative 3D, pitching to different halves of the same audience. I attended Meshy AI's session at last year's GDC, so I went with Tripo this time to see how the two compare. The products are similar enough in scope (even in UI design) that I will probably need to test both before I can say anything useful about which one is the better fit for actual game production work.

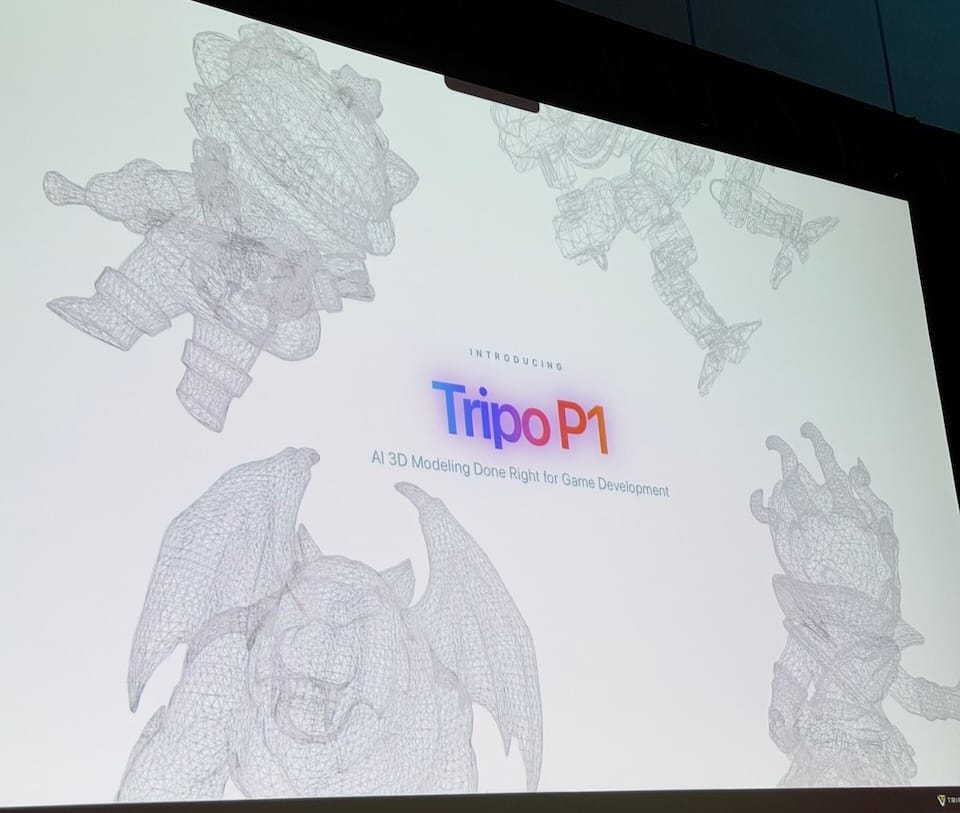

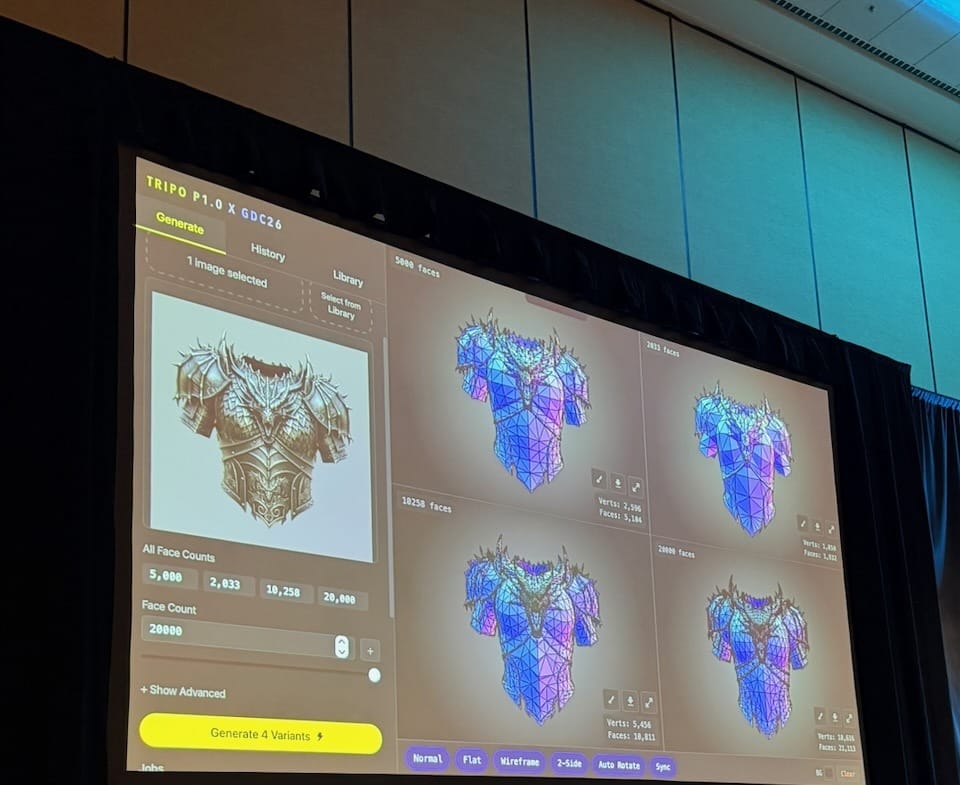

Tripo AI's research team laid out the problem clearly. Current generative 3D models behave like 3D scanners: they produce highly detailed but dense meshes that require manual sculpting, simplification, and Level of Detail (LoD) construction before they can go anywhere near a game engine. That is the first bottleneck. The second is speed—waiting for even a single asset to generate breaks creative flow, making iteration feel expensive before it starts. Their stated answer to both is Tripo P1, announced at the session.

P1 generates clean-topology meshes in 2 to 10 seconds, with face count controlled at generation time (500 to 20,000 faces). The live demo made this concrete: they took a piece of dark fantasy armor concept art, generated a mesh, and immediately showed four LoD variants at 2,000 / 5,000 / 10,000 / 20,000 faces side by side. From one input image to four engine-ready assets in a few seconds. They then ran batch generation: 12 game characters at once, 7 weapons in one pass. Whether the topology holds up in production use is a question that can only be answered by actually using it, but the demo was convincing as a demonstration of what is now technically possible.

I am paying close attention to this because I am currently working on reviving a 3D avatar system from one of my older games as a personal hobby project. The ability to go from a concept image to an engine-ready mesh without manual retopology would close the last gap in that pipeline. Tripo and Meshy AI are the two most visible tools in this space right now, and there are other competitors, Hyper3D and Tencent's Hunyuan 3D among them. It is worth noting that all of these are from Chinese companies, which raises questions that the session did not address: who owns the training data, what rights apply to generated output, and what liability a developer takes on by using these tools in a commercial project, especially in the US market. The SAG-AFTRA Interactive Media Agreement session earlier in the week covered the performer and IP dimensions of AI-generated content in detail. The same framework carries over here, applied to geometry and texture rather than voice and likeness. Anyone planning to use generative 3D in a real production pipeline should think through those questions before the assets get too far in.

Why Games Fail to Scale: Stop Optimizing CPI and Start Fixing the Game

Session: Thursday, March 12 | 11:50am – 12:10pm | Business Strategy

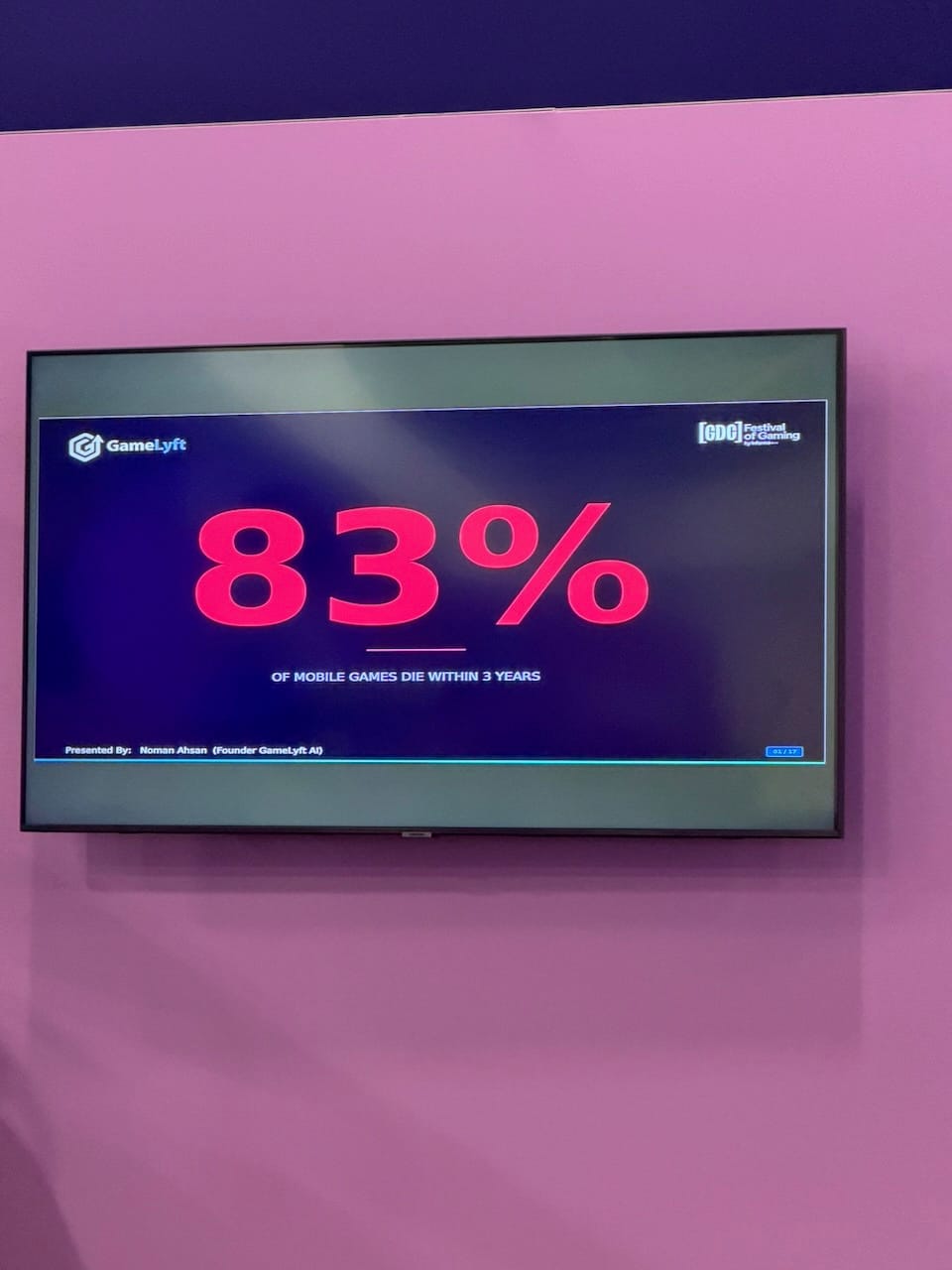

Speaker: Noman Ahsan (GameLyft AI)

This was a 20-minute sponsor Power Talk at the Monetization & Player Engagement lounge stage—closer to a product demonstration than a conference session. But the title earned my interest, and the framing behind it is worth taking seriously. The market data slide made the case quickly: gaming Cost Per Install (CPI) is up 30%, global downloads are down 7%, but time spent in games went up 8% and sessions jumped 12%. Players are still playing. The problem is not that games cannot find an audience. The problem is what happens after the install. "Your game is leaking value, and nobody on the team can see where."

The most honest moment in the talk was a postmortem story. A project was killed after burning through half a million dollars. In the postmortem, the UA lead blamed the game designer for making a boring game, and the game designer blamed UA for buying low-quality traffic. Ahsan's read was that both were right: the game had engagement problems and the traffic was mismatched, but the two teams were looking at separate dashboards and had no shared picture of the situation. "They were both right. They just didn't have the same map." That breakdown between product, monetization, and user acquisition teams is what GameLyft AI is built to address.

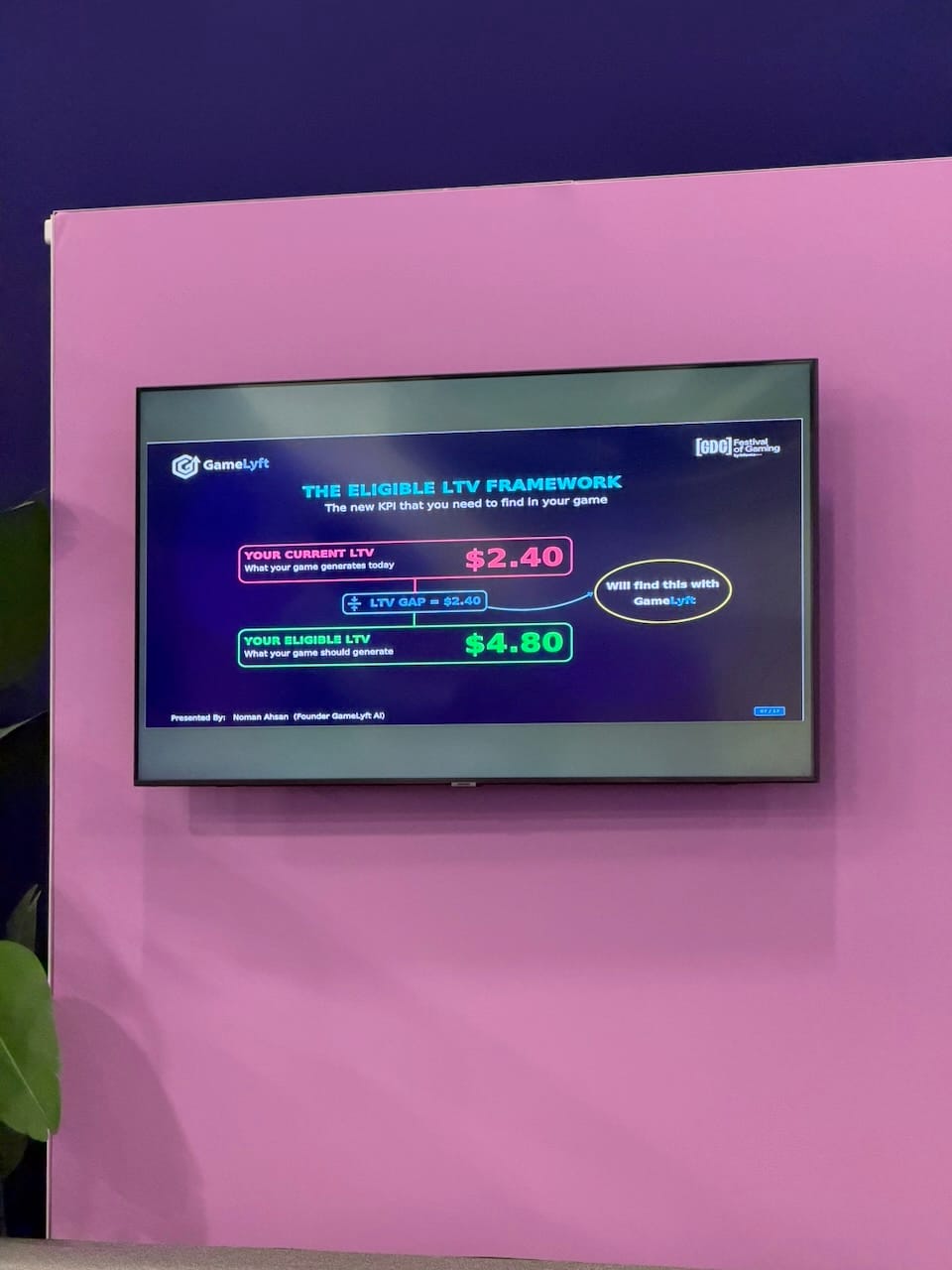

The core concept Ahsan introduced is what he calls Eligible LTV (Lifetime Value). Every game has the LTV it actually generates per user, and the LTV it should be generating based on real benchmarks from comparable games in the same genre, market, and monetization model (I'm not 100% sure such a benchmark is feasible to build, but it is a useful reference point). The gap between those two numbers is where the money is hiding. The example on the slide was blunt: a game generating roughly half its eligible LTV, and nobody in the studio tracking the gap. The framework unifies product, monetization, and UA into a single score rather than three separate dashboards, and then ranks the fixes by potential revenue impact so teams know what to work on first.
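
The gap-and-rank idea is simple enough to sketch. What follows is a minimal illustration under my own assumptions, not GameLyft AI's actual scoring model: the `Fix` class, `ltv_gap`, `rank_fixes`, and every number are hypothetical, and each fix's per-user uplift is assumed to be estimated somewhere else. It computes the per-user gap between eligible and actual LTV, then ranks candidate fixes by projected revenue (uplift, capped at the gap, times installs).

```python
from dataclasses import dataclass

@dataclass
class Fix:
    """A candidate improvement with an estimated per-user LTV uplift (hypothetical)."""
    name: str
    ltv_uplift: float  # estimated increase in LTV per user, in dollars

def ltv_gap(actual_ltv: float, eligible_ltv: float) -> float:
    """Per-user value left on the table relative to the benchmark (never negative)."""
    return max(eligible_ltv - actual_ltv, 0.0)

def rank_fixes(fixes: list[Fix], installs: int, gap: float) -> list[tuple[str, float]]:
    """Rank fixes by projected revenue: uplift (capped at the gap) times installs."""
    impacts = [(fix.name, min(fix.ltv_uplift, gap) * installs) for fix in fixes]
    return sorted(impacts, key=lambda item: item[1], reverse=True)

if __name__ == "__main__":
    # Illustrative numbers only: actual LTV is half of eligible LTV.
    gap = ltv_gap(actual_ltv=1.80, eligible_ltv=3.60)
    fixes = [
        Fix("move first purchase offer", 0.40),
        Fix("smooth difficulty spike", 0.90),
        Fix("retarget UA audience", 0.60),
    ]
    for name, revenue in rank_fixes(fixes, installs=100_000, gap=gap):
        print(f"{name}: ${revenue:,.0f}")
```

With these made-up inputs the difficulty fix ranks first. A real system would also have to handle fixes that overlap, since simply summing their uplifts can exceed the gap.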

I have been working in the online advertising industry since I left the game industry, and my day-to-day work is optimizing campaigns against advertiser KPIs. The thing you see clearly from that side of the table is that there is a ceiling above which no amount of campaign optimization can push the numbers, and that ceiling is set by the quality of the product itself. A game with a retention problem, a poorly timed first purchase offer, or a difficulty spike at the wrong level will underperform no matter how well the UA is bought. The talk was a product pitch, but the underlying point is real, and it applies equally whether you are looking at it from the studio side or from the ad side. Helping advertisers understand that boundary—and helping them find it before they burn the budget—is something I think about in my own work as well.

Artists, Do You Want to Fight for Your Future?

Session: Thursday, March 12 | 1:50pm – 2:50pm | Visual Development / Culture & Sustainability

Speaker: Andrew Maximov (ImagineMore.Art)

Andrew Maximov opened with a Milton Friedman line: "In times of crisis, the solutions are picked from stories lying around." His observation was that artists currently have no story worth building toward. Every conversation he has had over the past year and a half has been fundamentally pessimistic—not about whether AI is good or bad, but about whether there is any future in which artists have creative agency and economic security at the same time. The talk set out to replace the three narratives circulating in the industry (AI will never be as good; AI is unethical; AI will replace everyone) with something more honest and more useful.

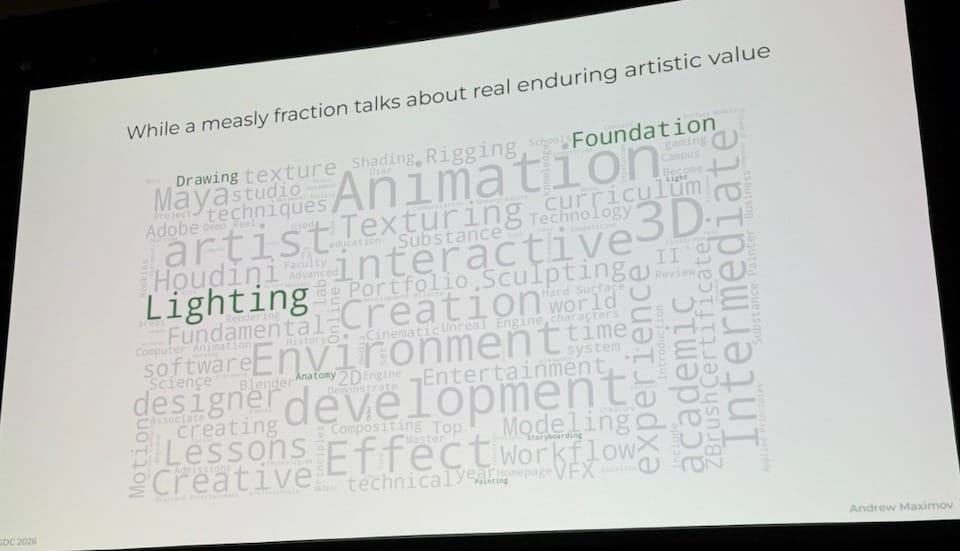

The most striking part of the talk was a slide that came from an analysis he ran on art school websites and online communities. He wrote a script to count the words used across these spaces and projected the result as a word cloud. The dominant terms: Maya, Blender, Substance, ZBrush, rigging, texturing, workflow, pipeline. Barely present: light, color, composition, cinematography, art history. "I couldn't find a space on the internet where artists just geek out on lighting and Renaissance paintings. ... And so that was the heartbreak and realization for me." The argument was not that tools are unimportant, but that the industry has trained generations of artists to optimize the thing that is most replaceable (technical implementation) while neglecting the thing that actually creates lasting value.
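
Maximov did not show his script, so this is only a guess at its general shape: a minimal Python sketch, assuming the page text has already been scraped into plain strings. The term lists and function names are hypothetical (the terms are taken from the words on his slide), and rendering the actual word cloud is left to a separate library.

```python
import re
from collections import Counter

# Hypothetical term lists, based on the words visible on Maximov's slide.
TOOL_TERMS = {"maya", "blender", "substance", "zbrush", "rigging",
              "texturing", "workflow", "pipeline"}
CRAFT_TERMS = {"light", "lighting", "color", "composition", "cinematography"}

def word_counts(pages: list[str]) -> Counter:
    """Lowercase each page, extract alphabetic words, and count them across all pages."""
    counts: Counter = Counter()
    for text in pages:
        counts.update(re.findall(r"[a-z]+", text.lower()))
    return counts

def total(counts: Counter, terms: set[str]) -> int:
    """Sum the occurrences of every term in the set (missing terms count as 0)."""
    return sum(counts[term] for term in terms)

if __name__ == "__main__":
    pages = [
        "Learn Blender and ZBrush: a texturing workflow for your pipeline.",
        "Color and composition in Renaissance light.",
    ]
    counts = word_counts(pages)
    print("tool terms:", total(counts, TOOL_TERMS))    # 5
    print("craft terms:", total(counts, CRAFT_TERMS))  # 3
```

The resulting `Counter` is exactly the kind of word-to-frequency mapping a word cloud renderer takes as input.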

The pivot came from an unexpected direction. Maximov runs a software company, and when his engineering team adopted AI coding tools, the initial reaction was familiar: "If I didn't write it, it's not my work." What actually happened was that velocity went up 3 to 4 times, code quality improved, and engineers learned faster because they were exposed to more systems and projects than before. The insight that followed was the sharpest line in the entire session: "The skills necessary to be a good engineer with AI are the same skills necessary to effectively work in a team, delegate, and direct." The engineers who thrived stopped protecting their individual technical silo and started focusing on the user and the product. The ones who struggled were the ones who had defined their value entirely through their tools.

The application to artists is direct. Art implementation (the tools, the techniques, the buttons) is what AI replaces. Art direction (the taste, the intent, the judgment about what to make and why) is what survives. As an engineer, I have found exactly the same thing in my own work. The question that used to matter was which tools you knew. The question that matters now is whether you know what you want to make, and whether you can direct the process toward that goal regardless of what is doing the work. That shift is not a loss of craft. It is craft at a higher level. Maximov's closing slide put it plainly:

"It's a story where artists gain resilience by getting better at what we love."

The thing worth practicing is not the next version of the software. It is the judgment that no software has.

From Developer to Publisher: The Benefits of a Japanese Studio's Decision to Pursue Small-Scale Projects

Session: Thursday, March 12 | 3:10pm – 4:10pm | Independent Development / Business Strategy

Speakers: Taichiro Miyazaki, Mimmy Shen (CyberConnect2 Co., Ltd.)

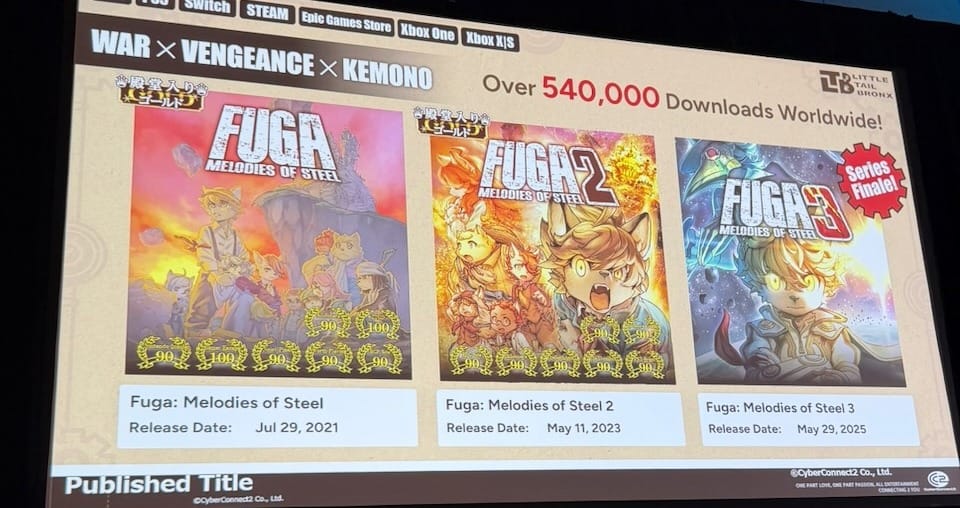

CyberConnect2 is a 30-year-old studio based in Fukuoka, Japan, best known as the developer behind the Naruto and .hack series. They are not an independent studio by background; they are a contract developer with 300 staff and a long history of handing finished games to publishers. In 2016, they made an unusual decision: to self-publish a small original game, not primarily to grow revenue, but to give junior developers a chance to ship. In the modern game industry, where projects take five or more years from start to release, a new hire can spend their entire early career contributing to a project that ships only after they have left, or that never ships at all. The C5 internal competition was CyberConnect2's answer to that problem. The winning pitch became Fuga: Melodies of Steel.

The session covered three episodes of that journey. The first was production: Fuga was planned as an 18-month project with a small team, and it took three years. The company's instinct to "let them fail" was right in spirit but went too far in practice—veteran support existed but was informal, not structural. The fix was to assign a veteran director, establish section leads for each discipline, and build a dedicated in-house programmer team so technical decisions did not bottleneck the director. "Letting new developers take the helm didn't mean abandoning them. It meant making sure there was support to catch them when they fell."

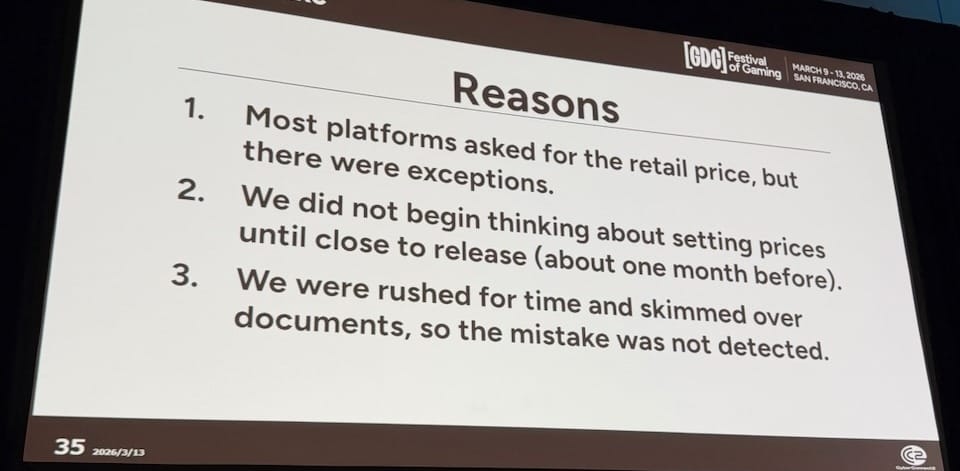

The second episode was publishing, which turned out to be an entirely different discipline. A company that had always handed off to a publisher had never ordered QA dev kits, had never navigated the differences between rating applications across regions and languages, and only started thinking about price setting one month before launch.

The pricing section was the most specific moment in the talk. The team discovered on launch day that the game had shipped at the wrong price on certain platforms. The cause was not carelessness so much as inexperience with the process: not all platforms ask for prices in the same way, pricing was treated as an afterthought rather than a project milestone, and the documentation was skimmed under time pressure. Beyond that immediate mistake, there was a deeper lesson about international pricing. Converting a yen price to other currencies by exchange rate produces numbers that do not reflect the actual value of a game in those markets. CyberConnect2 released in 80 countries simultaneously and had to research what a game of comparable scale and genre actually sells for in each region, which they called a game price index. The Big Mac Index analogy (comparing what the same product actually costs in each market, rather than what the exchange rate implies it should cost) landed well in the room.
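
To make the Big Mac Index comparison concrete, here is a minimal sketch contrasting the two approaches. The function names and all prices are hypothetical, and CyberConnect2 did not describe their methodology at this level of detail. The index approach derives an implied conversion ratio from what comparable games cost locally versus in Japan, much as the Big Mac Index derives an implied exchange rate from burger prices.

```python
from statistics import median

def fx_price(base_price_jpy: float, jpy_per_unit: float) -> float:
    """Naive approach: convert the yen price at the current exchange rate."""
    return base_price_jpy / jpy_per_unit

def index_price(base_price_jpy: float,
                comparables_jpy: list[float],
                comparables_local: list[float]) -> float:
    """Index approach: scale the yen price by the ratio of the median local price
    to the median Japanese price for comparable games (an implied conversion rate)."""
    return base_price_jpy * median(comparables_local) / median(comparables_jpy)

if __name__ == "__main__":
    base = 3000  # hypothetical base price in yen
    print(fx_price(base, 150))                                  # exchange-rate conversion
    print(index_price(base, [2000, 3000, 4000], [10, 12, 15]))  # price-index conversion
```

With these illustrative inputs the exchange-rate conversion gives 20.0 units of local currency, while the comparable-game prices suggest 12.0, which is the kind of gap the team had to research market by market.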

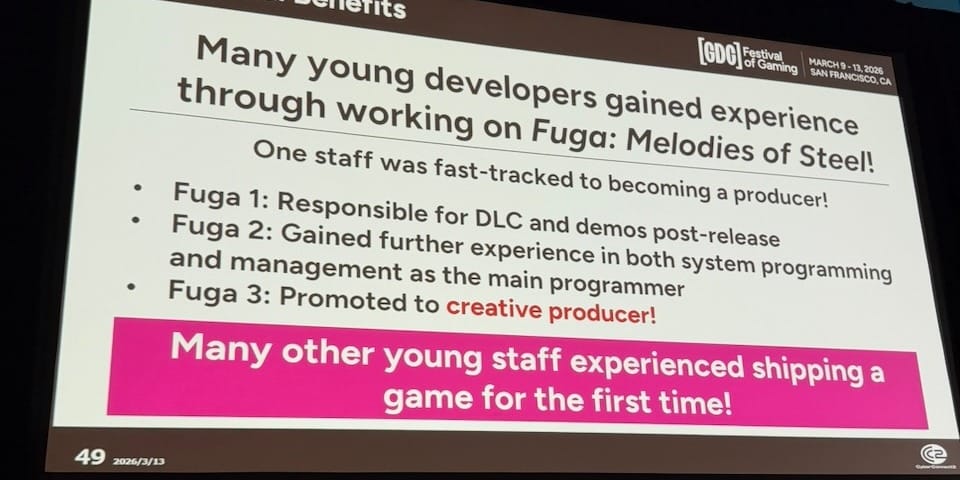

What the project actually delivered, in the end, was exactly what it set out to do. One programmer was fast-tracked through all three games in the trilogy and promoted to creative producer by the time Fuga 3 shipped in 2025. Many other junior staff shipped a commercial game before their fifth year in the industry. The trilogy reached over half a million downloads worldwide without a single yen of paid advertising—built entirely on patches, user feedback, and word of mouth.

This was not the most technically dense session of the week, but it was one of the ones I am most glad I attended. I am not aware of many studios (in Japan or in the US) that have built a formal internal system specifically to give junior developers the experience of shipping a game. Most studios treat that problem as a staffing constraint or a development process question, not as something worth designing a whole project around. The session was also a reminder of something GDC sometimes forgets: the conference is more valuable when international studios show up and share their specific, honest experience, even when the lessons seem basic. Learning that QA dev kits have to be ordered, or that rating applications may be in languages you do not speak, is useful information for anyone considering the same path. I would like to see more sessions like this one. GDC could invest more in lighter formats: poster sessions, hot-talk slots, and short experience-sharing talks from studios that would not normally submit a full hour. CyberConnect2 came from Fukuoka to present a careful, honest account of a project most Western developers would not know about. That is worth the room.

From a Naughty Dog to a Wildflower: The Fears, Failures, and Freedoms Found

Session: Thursday, March 12 | 4:30pm – 5:30pm | Independent Development

Speaker: Bruce Straley (Wildflower Interactive)

I walked into this session by accident, following the path toward the GDC Awards. The Festival Stage in South Hall sits in the middle of the floor, open seating, no walls, the hum of the convention around you. Bruce Straley spent the better part of two decades at Naughty Dog, where he co-directed Uncharted 2, Uncharted 4, and The Last of Us. He left in 2017, took some lunches, worked on some odd projects, and eventually started prototyping with a former colleague on something he had been thinking about since playing Ico: a game built around what he called "the playable relationship"—a companion whose emotional state responds to how you actually treat them, not a scripted arc. That became Coven of the Chicken Foot, a game about a little old lady named Gert and a creature named Buddy. Wildflower Interactive formed in 2021.

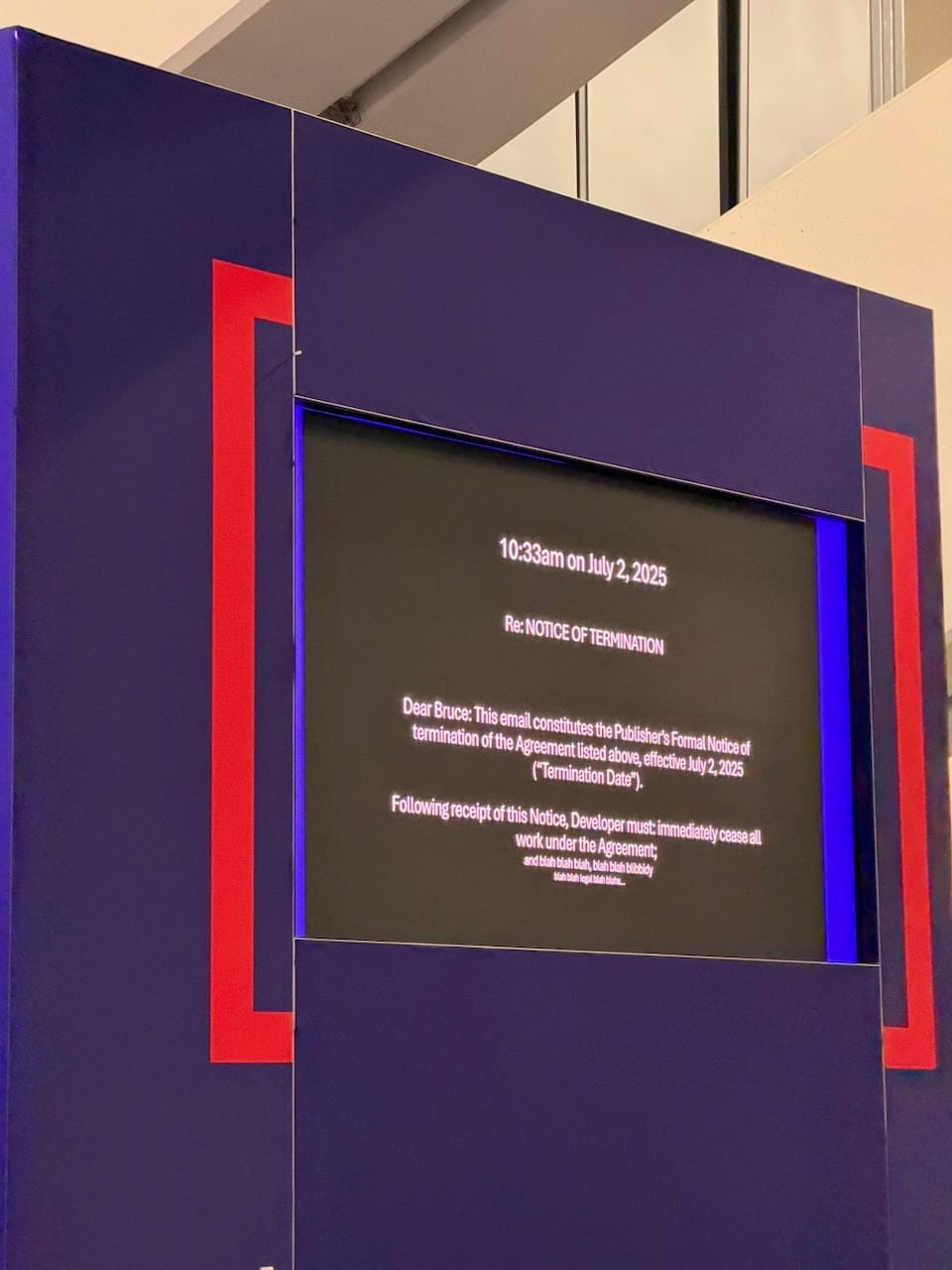

The talk moved quickly through the years between there and here. The early part covered what he wanted to build: a small, scrappy studio with the culture of early Naughty Dog but less of the weight (lean, always in-game, people over process). The middle covered everything that did not go as planned. COVID took away his pacing-the-floor direction style. Quarterly publisher milestones consumed more time in preparation and review than in actually making the game. A working prototype was thrown out in favor of a more ambitious companion system that became a science project. Staff left for AAA pay. A year and a half was spent renegotiating contract terms. Then the slide: a publisher email timestamped 10:33am on July 2, 2025.

He read it on stage. The publisher had terminated the contract for convenience, not for cause, a distinction that mattered because a kill clause in the contract gave the studio some financial runway. He weighed his options: fight to keep everyone and find new funding, rescope, or close the company and take the money. He chose to rescope. Then came three months of sorting through code left behind by people who had moved on, of eroded trust and uncertainty. Then a programmer looked at a problem that had seemed impossible and said: "It's just a knob." He took that as the sign the team had come through. A trailer went out. The response was good. They are still making the game.

The talk landed differently for me because of where it sat in the day. Earlier sessions that day had covered AI tools, generative assets, and the question of what skills actually matter when the technology is changing this fast. Straley's talk came from the opposite direction and arrived at the same place, one that also echoed the keynote: the thing that does not go away is the willingness to make something and put yourself behind it. His closing line was honest and a little rough and exactly right: "Stick your chest out. Be like, fuck it. Let's try." Sessions like this one, where a veteran steps back from the craft and talks plainly about what happened and why they kept going, are some of the most valuable hours at GDC. I would like to see more of them.

'Genshin Impact' Miliastra Wonderland: Co-Creating a Virtual World with Users

Session: Friday, March 13 | 9:30am – 10:30am | Design / Game & Production Technology

Speaker: Xin Ning (miHoYo), presenting on behalf of Siyu Li (miHoYo)

I do not play Genshin Impact, but I have always had some respect for what miHoYo built. When Genshin launched in 2020, it was the game I had imagined but never made: anime-style subculture aesthetics combined with the open-world exploration language of Zelda, delivered free to a global audience and sustained through live service over years. This session was about where that game is going next. Miliastra Wonderland is Genshin's User Generated Content (UGC) module, launched in October 2025 after two years of development. Of all the sessions I attended that week, this was the most product-ready presentation: not research, not a prototype, but a shipped system with real users and real results already in hand.

The motivation was practical. When miHoYo released the Sumeru region in 2022 (massive even by Genshin's standards), they realized they had hit a ceiling. A team of over 1,000 people could not keep doubling its output indefinitely. At the same time, the game's fan community was already producing a continuous stream of artwork, streams, and videos. The question was whether that energy could be redirected from fan content into the game itself. The framing they used: miHoYo's stated vision is a virtual world for 1 billion users by 2030. "If you use UGC to convert more users into creators rather than simply recruiting them, we will get closer to this goal."

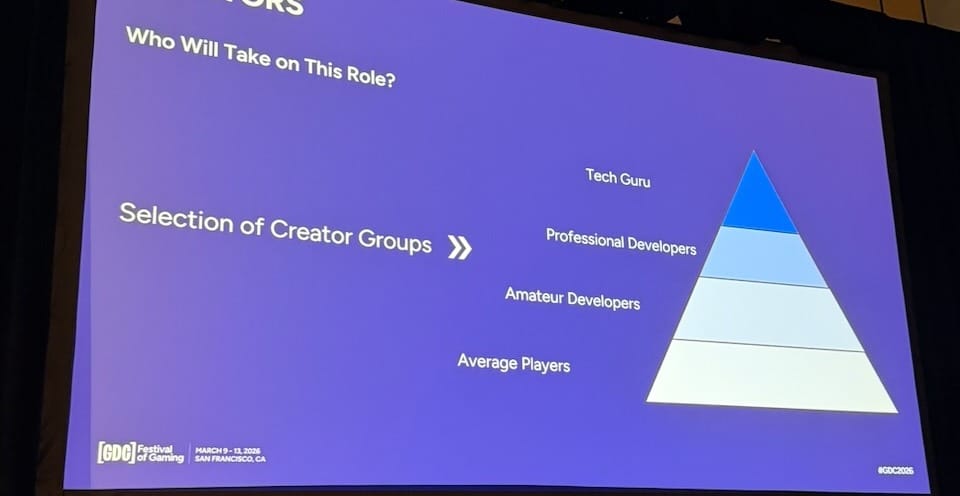

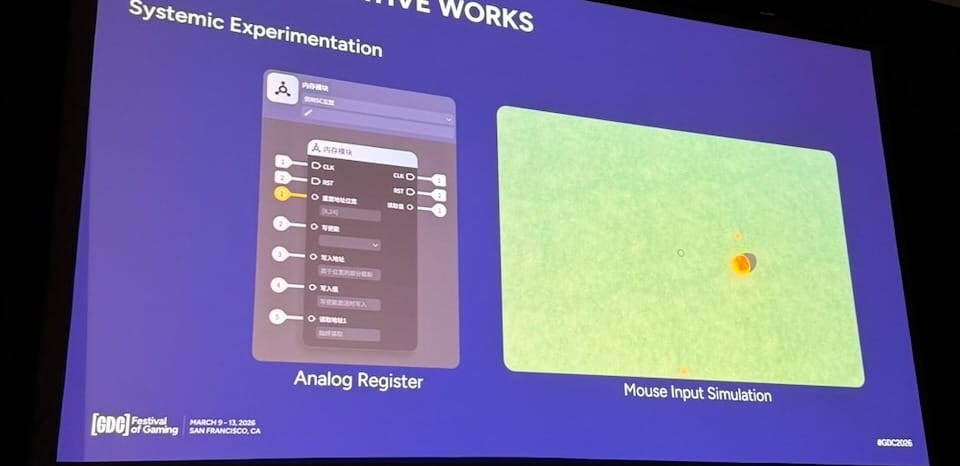

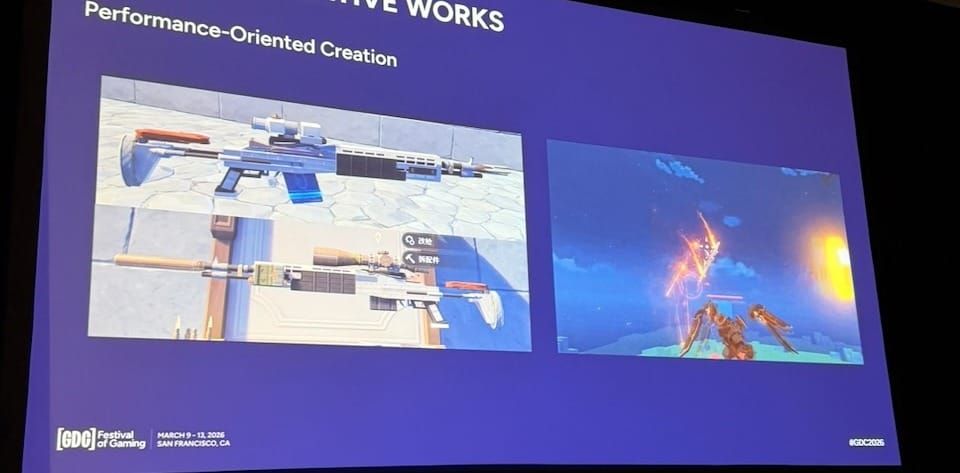

The platform is structured around three groups: creators, content, and participants. On the creator side, they defined four capability tiers (Tech Guru, Professional Developers, Amateur Developers, and Average Players) and deliberately set their target bar at the amateur developer level. The goal was not to serve only power users, but to help average players grow into amateur developers. To support this, they built a Craftsman Academy (video tutorials, comprehensive guides, real-time error logs), chose visual node editing over scripting to lower the learning curve, and introduced financial rewards tied to stage popularity through what they call the Bounty of Divine Ingenuity plan. For more advanced sharing, a Wonderland Resource Center lets creators publish stage files and assets for others to reuse; their analogy was open-source code.