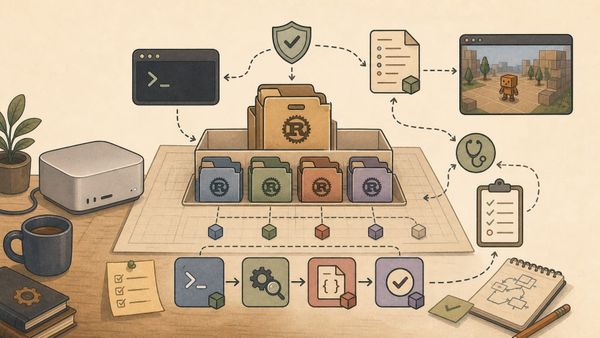

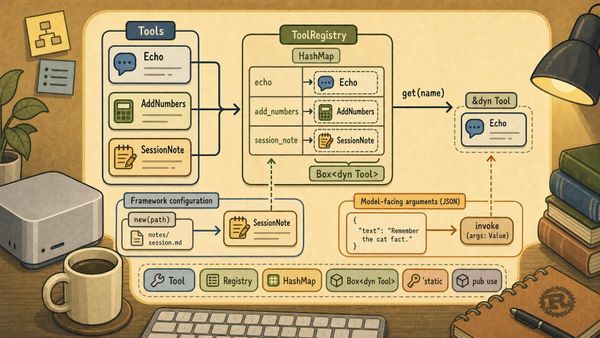

Building a Local-First Agent Framework in Rust (Part 6): Tools and the Registry

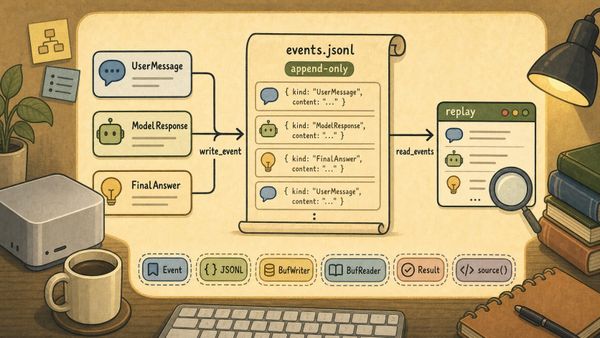

See Part 0 for the latest table of contents and sample code. New chapters will be added over time.

Chapter 6: Tools and the Registry

Chapter 5 gave abcb a way to record what happened. That was not a feature that made the model smarter, but it changed the framework&